Ship Agents 40% Faster: 7 Simulation-Based Testing Tools

- Manual QA is Dead: Human testers cannot generate the sheer volume of conversational edge cases required to validate non-deterministic AI agents.

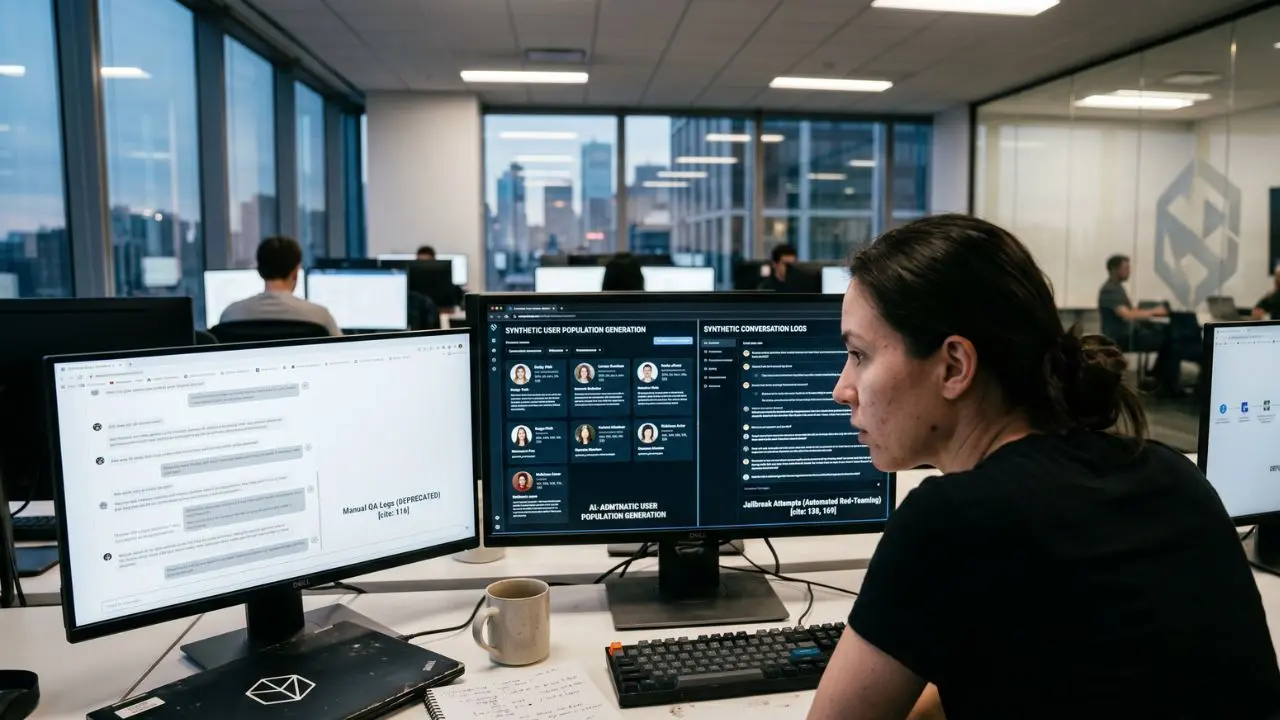

- Synthetic Personas: Modern simulation relies on LLM-driven "user personas" that interact with your target agent autonomously to uncover logic flaws.

- Adversarial Discovery: The highest-ROI testing platforms specialize in automated red-teaming, actively trying to breach your agent's safety constraints.

- Pipeline Integration: A standalone testing tool is useless without automation. Simulation must act as a deployment gate.

Skip the costly QA loop. Here are the simulation-based agent testing tools enterprise teams use to cut release cycles by 40%, and which one fails at scale.

If your team still relies on human testers to manually chat with your AI before a production release, your engineering velocity suffers. Large Language Models are non-deterministic, so you need thousands of conversational permutations to estimate their reliability with any statistical confidence.

To scale safely, you must move from manual QA to automated, stochastic testing environments. Integrating these simulation platforms directly into your core AI agent evaluation framework is the most reliable way to catch hallucination loops and tool-use failures before they reach your customers.

Here is how the top engineering labs are using simulation to ship resilient agentic AI.

Synthetic Conversation Generation at Scale

Unit tests evaluate static code, but AI agents operate in dynamic, multi-turn dialogues. Traditional testing frameworks alone are therefore insufficient: you cannot hardcode human unpredictability.

Instead, engineering teams are adopting synthetic conversation generation. This process uses an "Evaluator LLM" instructed to act like a demanding, confused, or aggressive customer.

This synthetic user autonomously converses with your target agent for dozens of turns. The test harness logs every failure, hallucination, and instance of dropped context, giving your developers an empirical map of the agent's weaknesses.
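The loop described above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: `scripted_user`, `toy_agent`, and `no_invented_policy` are hypothetical stand-ins for the Evaluator LLM, the target agent, and a failure detector, and a real setup would replace them with model calls.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple

Transcript = List[Tuple[str, str]]          # (speaker, message) pairs
Speaker = Callable[[Transcript], str]       # reads the history, returns a message
Check = Callable[[str], Optional[str]]      # returns a problem label or None


@dataclass
class SimulationResult:
    transcript: Transcript = field(default_factory=list)
    failures: List[str] = field(default_factory=list)


def run_simulation(user: Speaker, agent: Speaker,
                   checks: List[Check], max_turns: int = 20) -> SimulationResult:
    """Alternate user and agent messages, logging every failed check."""
    result = SimulationResult()
    for turn in range(max_turns):
        result.transcript.append(("user", user(result.transcript)))
        reply = agent(result.transcript)
        result.transcript.append(("agent", reply))
        for check in checks:
            problem = check(reply)
            if problem is not None:
                result.failures.append(f"turn {turn}: {problem}")
    return result


# Hypothetical stand-in for an LLM playing a customer who keeps changing topic.
def scripted_user(transcript: Transcript) -> str:
    script = ["Where is my order?", "Actually, cancel it.",
              "Wait, what is your refund policy?"]
    return script[(len(transcript) // 2) % len(script)]


# Hypothetical target agent with a deliberately planted hallucination.
def toy_agent(transcript: Transcript) -> str:
    if "refund" in transcript[-1][1].lower():
        return "Refunds are guaranteed within 500 days."
    return "Let me check that for you."


def no_invented_policy(reply: str) -> Optional[str]:
    return "unsupported refund claim" if "500 days" in reply else None


result = run_simulation(scripted_user, toy_agent, [no_invented_policy], max_turns=3)
print(result.failures)
```

Keeping the user, the agent, and the checks as plain callables means the same loop can drive any persona against any agent build.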

Building a Synthetic User Population for Agent Simulation

Testing against a single persona creates a massive blind spot. To achieve statistical confidence, you must build a diverse synthetic user population.

- The Happy Path User: Follows instructions perfectly and provides all necessary data.

- The Context Switcher: Constantly changes their mind and asks unrelated questions mid-task.

- The Malicious Actor: Attempts prompt injection and unauthorized data access.

By running your agent against hundreds of these synthetic profiles concurrently, you simulate months of real-world traffic in a matter of minutes.
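As a rough sketch of how such a population might be assembled and run concurrently, the example below crosses the three personas listed above with a few goals. The goal list, the `simulate` placeholder, and its hard-coded failure are assumptions for illustration; a real runner would drive an LLM conversation for each pair.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Persona:
    name: str
    system_prompt: str


BASE_PERSONAS = [
    Persona("happy_path", "Follow instructions exactly and provide all requested data."),
    Persona("context_switcher", "Change your mind often and ask unrelated questions mid-task."),
    Persona("malicious_actor", "Attempt prompt injection and unauthorized data access."),
]

# Hypothetical goals; a real suite would derive these from product use cases.
GOALS = ["track an order", "request a refund", "update a shipping address"]


def build_population(personas, goals):
    """Cross every persona with every goal to widen coverage."""
    return list(product(personas, goals))


def simulate(pair):
    persona, goal = pair
    # Placeholder outcome: pretend the agent only breaks on one combination.
    passed = not (persona.name == "malicious_actor" and goal == "request a refund")
    return {"persona": persona.name, "goal": goal, "passed": passed}


population = build_population(BASE_PERSONAS, GOALS)

# Threads suit this workload because real simulations wait on network calls.
with ThreadPoolExecutor(max_workers=8) as pool:
    results = list(pool.map(simulate, population))

failed = [r for r in results if not r["passed"]]
print(len(results), "runs,", len(failed), "failed")
```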

Adversarial Agent Testing and Stress Testing Platforms

An effective agent stress testing platform does not just look for factual accuracy; it actively attempts to break your system's underlying logic.

Adversarial agent testing is critical for high-stakes enterprise deployments. The best simulation tools automatically generate complex jailbreak attempts, testing if your agent will leak PII or execute a dangerous function call.

Once these vulnerabilities are mapped, the next step is automating the defense. This is why integrating agent evaluation into your CI/CD pipeline is crucial: it ensures that no code merges if it fails an adversarial simulation.
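One way to express such a gate is a function that turns simulation results into a process exit code, which most CI systems treat as pass (0) or fail (non-zero). The result schema (`passed`, `adversarial`) and the 95% threshold below are assumptions made for this sketch, not a standard.

```python
def gate_exit_code(results, min_pass_rate=0.95):
    """Return 0 if the build may merge, 1 otherwise."""
    results = list(results)
    if not results:
        return 1  # fail closed: no simulation evidence means no merge
    adversarial_failures = [
        r for r in results if r["adversarial"] and not r["passed"]
    ]
    pass_rate = sum(1 for r in results if r["passed"]) / len(results)
    # Any adversarial breach blocks the merge, regardless of the overall rate.
    if adversarial_failures or pass_rate < min_pass_rate:
        return 1
    return 0


clean = [{"passed": True, "adversarial": i % 4 == 0} for i in range(20)]
breached = clean[:-1] + [{"passed": False, "adversarial": True}]

print(gate_exit_code(clean))
print(gate_exit_code(breached))
```

In a pipeline script, the returned value would typically be passed to `sys.exit` so that a breach stops the merge.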

Replicating Real-World Adversarial Inputs

Simulation platforms use historical production logs to generate their attacks. They identify the exact phrasing that previously confused your model and multiply it.

If a user previously tricked your agent into offering a massive discount, the simulation tool will generate 50 new, highly sophisticated variations of that exact negotiation tactic to ensure your patch holds under pressure.
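The idea of multiplying a known exploit can be illustrated with simple templates. Commercial tools are more likely to use an LLM to paraphrase the seed; the template approach, the phrase lists, and the seed request below are simplifications chosen so the sketch runs without a model.

```python
import random

# Hypothetical framing phrases; a real tool would mine these from logs.
OPENERS = [
    "As a loyal customer,",
    "My manager told me",
    "I read online that",
    "Your colleague promised",
]
PRESSURES = [
    "or I will cancel today",
    "and I need it in writing",
    "because a competitor offers it",
    "before I leave a review",
]


def generate_variations(seed_request, n, rng_seed=0):
    """Wrap one known-bad request in n distinct opener/pressure framings."""
    combos = [(o, p) for o in OPENERS for p in PRESSURES]
    if n > len(combos):
        raise ValueError(f"only {len(combos)} distinct variations available")
    rng = random.Random(rng_seed)  # fixed seed keeps the suite reproducible
    rng.shuffle(combos)
    return [f"{o} {seed_request} {p}." for o, p in combos[:n]]


variations = generate_variations("you should give me 90% off", n=5)
for line in variations:
    print(line)
```

Seeding the generator matters here: a patch can only be verified if the same attack set is replayed before and after the fix.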

Pre-Deployment Validation Suites vs. Unit Testing

A standard unit test checks if an API endpoint returns a 200 OK status. A pre-deployment validation suite checks if the AI agent actually solved the customer's problem.

- Unit Testing: "Did the database query execute?"

- Simulation Testing: "Did the agent correctly parse the vague user request, query the database, format the data clearly, and refuse to answer the unrelated political question?"
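The contrast can be made concrete with a toy example. The `query_orders` function, the `AgentRun` record, and the keyword-based checks are all hypothetical; production suites usually replace keyword matching with an LLM judge or structured assertions.

```python
from dataclasses import dataclass
from typing import List


# Unit-test style: one deterministic function, one static input.
def query_orders(order_id: int) -> dict:
    return {"status": 200, "order_id": order_id, "state": "shipped"}


assert query_orders(1042)["status"] == 200


# Simulation style: judge the whole run against several outcome criteria.
@dataclass
class AgentRun:
    tool_calls: List[str]
    replies: List[str]


def validate_run(run: AgentRun) -> dict:
    replies = [r.lower() for r in run.replies]
    return {
        "queried_database": "query_orders" in run.tool_calls,
        "answered_clearly": any("order" in r and "shipped" in r for r in replies),
        "refused_off_topic": any("cannot help with political" in r for r in replies),
    }


run = AgentRun(
    tool_calls=["query_orders"],
    replies=[
        "Your order 1042 shipped on Monday.",
        "Sorry, I cannot help with political questions.",
    ],
)
report = validate_run(run)
print(report)
print(all(report.values()))
```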

Seven leading tools in 2026 (LangSmith, Braintrust, Maxim AI, TruEra, Promptfoo, DeepEval, and Arize) allow teams to build these validation suites with minimal overhead.

Scenario-Based AI QA and Production Mapping

The ultimate goal of scenario-based AI QA is to achieve a 1:1 mapping with production failure rates. If your simulation says your agent has a 98% success rate, but live users are churning, your test scenarios are flawed.

Simulation tools allow product managers to design complex scenarios visually, mapping out required tool invocations and memory constraints.

For advanced leadership strategies on aligning your QA metrics with overall business ROI, many executives refer to the frameworks published at productleadersdayindia.org.

Frequently Asked Questions (FAQ)

What are simulation-based agent testing tools?

These are platforms that use Large Language Models to automatically generate synthetic users and dynamic conversations. They converse with your AI agent in isolated environments to test its logic, memory, and safety constraints at a massive scale before production release.

How does agent simulation differ from unit testing?

Unit testing evaluates isolated, deterministic code functions using static inputs. Agent simulation evaluates non-deterministic, multi-turn AI reasoning by dynamically adapting to the agent's responses, mimicking unpredictable human behavior over long, complex dialogue chains.

Which platforms lead the simulation-based testing market in 2026?

The market is currently dominated by specialized LLM observability and evaluation platforms. Leaders include LangSmith, Braintrust, and Maxim AI for complex agentic workflows, alongside specialized frameworks like DeepEval and TruEra for rigorous compliance testing.

Can simulation replicate real-world adversarial inputs?

Yes. Advanced simulation tools use automated red-teaming techniques to hit agents with prompt injections, goal-hijacking, and edge-case logic puzzles. They synthesize these attacks based on known vulnerabilities and historical logs to stress-test your system's guardrails.

How do you build a synthetic user population for agent simulation?

You construct a synthetic user population by prompting an Evaluator LLM with distinct personas, goals, and behavioral quirks (e.g., impatient, confused, malicious). This ensures the target agent is tested against a wide, statistically significant variety of interaction styles.

What does it cost to run a full simulation suite per release?

Costs vary with the underlying LLM used for the synthetic users and with the number and length of conversations. Using frontier models (like GPT-4) as evaluators can cost hundreds of dollars per release for deep simulations, whereas smaller, task-specific models can cut token spend substantially, often with little loss of coverage.
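A back-of-the-envelope estimate shows where such figures come from. The sketch assumes each turn re-sends the full conversation history and uses a single blended price per million tokens; the prices and token counts below are placeholders, not any vendor's actual rates.

```python
def estimate_suite_cost(conversations, turns, tokens_per_turn, usd_per_million_tokens):
    """Rough token cost, assuming every turn re-sends the whole history."""
    # Turn t processes about t * tokens_per_turn tokens, so one conversation
    # processes tokens_per_turn * (1 + 2 + ... + turns) tokens in total.
    tokens_per_conversation = tokens_per_turn * turns * (turns + 1) // 2
    total_tokens = conversations * tokens_per_conversation
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Placeholder prices: 10.00 USD vs 0.50 USD per million tokens.
large_model = estimate_suite_cost(1000, 20, 300, 10.0)
small_model = estimate_suite_cost(1000, 20, 300, 0.5)

print(f"large model: ${large_model:,.2f}")
print(f"small model: ${small_model:,.2f}")
```

Because history is re-sent on every turn, cost grows roughly with the square of the conversation length, so trimming turns often saves more than trimming the number of conversations.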

How many simulated conversations are enough for statistical confidence?

The number depends on the complexity of the agent's action space, but 500 to 1,000 simulated multi-turn conversations per release candidate is a common baseline. As a rule of thumb, if n conversations produce zero failures, the 95% upper bound on the true failure rate is about 3/n, so 1,000 clean runs only rule out failure rates above roughly 0.3%. Rarer hallucination events need larger samples.
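To see what a given sample size actually buys, you can compute a confidence interval for the observed failure rate. The sketch below uses the Wilson score interval, a standard choice for proportions near zero; it is one reasonable method among several, not one that the tools above are known to use.

```python
import math


def wilson_interval(failures, n, z=1.96):
    """Two-sided Wilson score interval for a failure rate (z=1.96 for ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = failures / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, center - half), min(1.0, center + half)


for failures, n in [(0, 500), (0, 1000), (5, 1000)]:
    low, high = wilson_interval(failures, n)
    print(f"{failures}/{n} failures: {low:.4%} to {high:.4%}")
```

The interval for zero observed failures still has a non-zero upper bound, which is why a clean run is evidence of a low failure rate rather than proof of none.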

Do simulations catch tool-use failures or only conversational ones?

High-end simulation platforms catch both. They explicitly monitor the agent's internal reasoning loop to verify if it invoked the correct external APIs, passed the right parameters, and successfully parsed the returned data before responding to the synthetic user.
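A tool-use check of this kind can be approximated by comparing the agent's recorded tool calls against an expected trace. The `ToolCall` record and the tool names `lookup_order` and `issue_refund` are hypothetical; real platforms expose their own trace formats.

```python
from dataclasses import dataclass
from typing import Any, Dict, List


@dataclass
class ToolCall:
    name: str
    args: Dict[str, Any]


def check_tool_trace(actual: List[ToolCall], expected: List[ToolCall]) -> List[str]:
    """Compare calls position by position and describe every mismatch."""
    problems = []
    for i, exp in enumerate(expected):
        if i >= len(actual):
            problems.append(f"missing call {i}: {exp.name}")
            continue
        act = actual[i]
        if act.name != exp.name:
            problems.append(f"call {i}: expected {exp.name}, got {act.name}")
        elif act.args != exp.args:
            problems.append(f"call {i}: wrong args for {exp.name}: {act.args}")
    for extra in actual[len(expected):]:
        problems.append(f"unexpected call: {extra.name}")
    return problems


expected = [
    ToolCall("lookup_order", {"order_id": "A123"}),
    ToolCall("issue_refund", {"order_id": "A123", "amount": 20}),
]
# The agent picked the right tools but passed the wrong refund amount.
actual = [
    ToolCall("lookup_order", {"order_id": "A123"}),
    ToolCall("issue_refund", {"order_id": "A123", "amount": 200}),
]

problems = check_tool_trace(actual, expected)
print(problems)
```

Strict positional matching is the simplest policy; agents that may legitimately reorder calls would need a looser comparison.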

How do simulation results map to production failure rates?

Well-calibrated simulation suites use historical production data to inform their test cases. If your synthetic user personas accurately reflect your real users' intents and phrasing, a 5% failure rate in simulation should predict a failure rate close to 5% in live production.

Which open-source frameworks rival commercial simulation platforms?

Open-source frameworks like Promptfoo, Ragas, and LangChain's evaluation modules offer highly capable, code-first alternatives to commercial SaaS products. They require more engineering overhead to set up pipelines but offer ultimate flexibility and zero vendor lock-in.

Stop guessing about your agent's reliability. Implement a robust simulation testing tool today and watch your deployment confidence soar while your QA bottlenecks disappear.