Coding for Gemini 3.1 Native Memory

A viral enterprise strategy document recently claimed that Google's latest update introduces a cross-provider chat history API that makes vector databases obsolete.

Google's official developer documentation shows no sign of this "native state hydration," but Gemini 3.1's context caching still changes how frontend engineers manage session state.

Quick Facts

- The native myth: Google's official Gemini 3.1 documentation contains zero evidence of a cross-provider chat history ingestion tool.

- Context caching rules: Google handles session state through explicit and implicit context caching, discounting repeated tokens by up to 90% rather than magically hydrating cross-platform states.

- Vector databases survive: Engineers still need external databases for memory that must outlive the cache TTL or that exceeds the 1 million token context window.
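
To make the caching discount concrete, here is a minimal sketch of the arithmetic. The per-token price and the flat 90% discount are illustrative assumptions rather than published rates, and real billing may include other charges (such as cache storage) that this ignores.

```python
def input_cost(total_tokens: int, cached_tokens: int,
               price_per_million: float, cache_discount: float = 0.90) -> float:
    """Estimate input cost when part of the prompt is served from a cache.

    price_per_million and cache_discount are hypothetical placeholders,
    not official pricing.
    """
    if not 0 <= cached_tokens <= total_tokens:
        raise ValueError("cached_tokens must be between 0 and total_tokens")
    fresh_tokens = total_tokens - cached_tokens
    discounted_rate = price_per_million * (1.0 - cache_discount)
    total = fresh_tokens * price_per_million + cached_tokens * discounted_rate
    return total / 1_000_000


# A 500,000-token prompt at a made-up $2.00 per million input tokens,
# first with no cache hits, then with 450,000 tokens served from cache.
uncached = input_cost(500_000, 0, 2.00)
cached = input_cost(500_000, 450_000, 2.00)
```

Under these assumed numbers the cached request costs about $0.19 instead of $1.00. The savings only apply to the repeated prefix; new tokens are billed at the full rate.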

The Allure of Native State Hydration

Frontend developers have spent years building brittle round trips to vector databases just to maintain session state.

When claims surfaced that Gemini 3.1's doubled context retention and cross-provider chat history ingestion broke traditional RAG architecture, CTOs paid attention.

The promise was simple: stop paying for custom internal RAG infrastructure and let the LLM own the user's longitudinal memory.

Debunking the Cross-Provider Myth

Searches of Google's official Cloud and developer API documentation show no trace of a Gemini chat history migration tool.

The idea that developers can hit an endpoint and instantly port OpenAI or Claude histories natively into Gemini 3.1 is entirely unverified.

"The model doesn't make any distinction between cached tokens and regular input tokens. Cached content is a prefix to the prompt."

— Google API Context Caching Documentation.

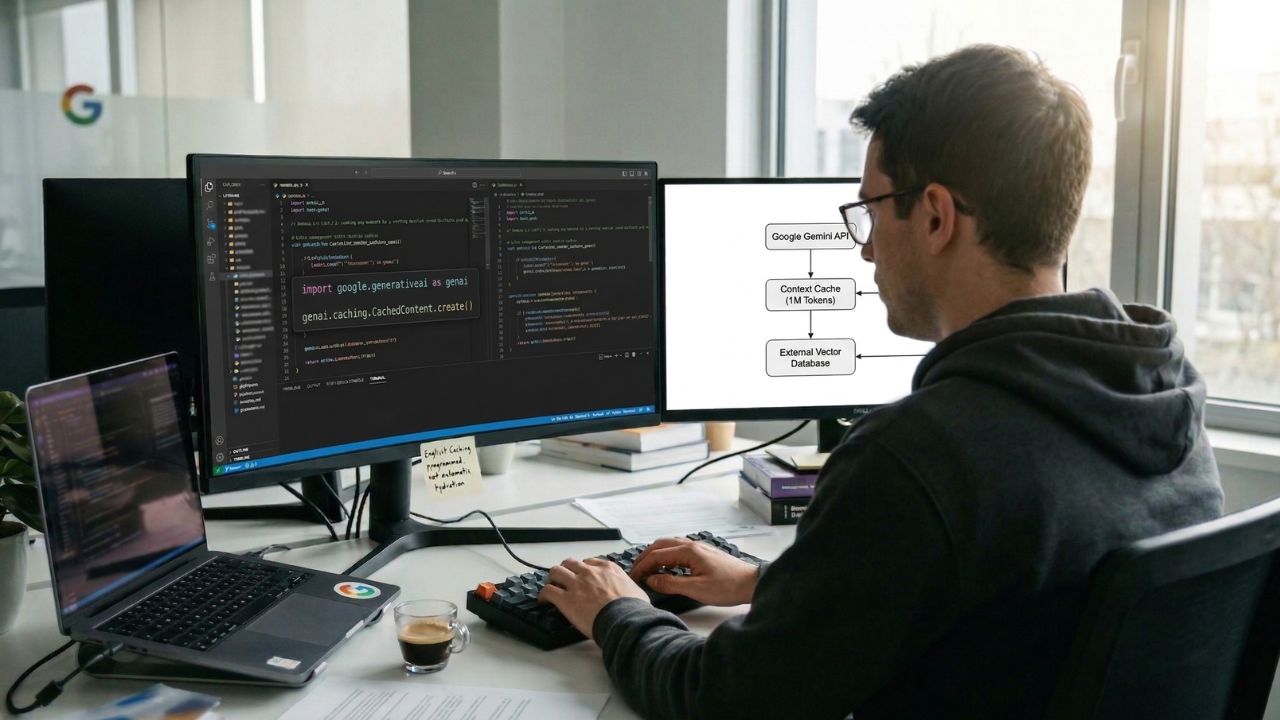

How Google Actually Handles State

Instead of automatic native memory, Google scaled its Context Caching API.

Developers use explicit caching to pass a massive corpus of tokens once, set a Time-to-Live (TTL), and reference it in subsequent calls.

This drastically cuts latency and token costs but requires active, programmatic state management.
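
The lifecycle described above (send the corpus once, set a TTL, reference it later) can be sketched with a small local model. This is not the Google SDK: `ExplicitCacheModel`, its methods, and the `cache-...` name format are hypothetical stand-ins that only illustrate the state the application has to track.

```python
import time
import uuid
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class CacheEntry:
    name: str          # opaque handle returned to the caller
    prefix: str        # the large corpus, sent once
    expires_at: float  # absolute expiry time in seconds


class ExplicitCacheModel:
    """Local stand-in for a server-side context cache (hypothetical)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._entries: Dict[str, CacheEntry] = {}

    def create(self, prefix: str, ttl_seconds: float) -> str:
        """Store the prefix once and return a name to reference it by."""
        name = f"cache-{uuid.uuid4().hex}"
        expires_at = self._clock() + ttl_seconds
        self._entries[name] = CacheEntry(name, prefix, expires_at)
        return name

    def resolve(self, name: str) -> Optional[str]:
        """Return the cached prefix, or None if missing or expired."""
        entry = self._entries.get(name)
        if entry is None or self._clock() >= entry.expires_at:
            self._entries.pop(name, None)  # drop stale entries
            return None
        return entry.prefix

    def build_prompt(self, name: str, user_turn: str) -> str:
        """Prepend the cached prefix to a new user turn."""
        prefix = self.resolve(name)
        if prefix is None:
            raise KeyError(f"{name} expired; re-create the cache and retry")
        return prefix + "\n" + user_turn
```

The point of the sketch is the failure mode: once the TTL lapses, the handle is useless and the caller must re-upload the corpus or fall back to another store. That bookkeeping is the "active, programmatic state management" the caching approach requires.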

The Future of API Memory

The industry is racing toward a future where autonomous agents redefine programming, but we are not yet at the point of zero-infrastructure memory.

Custom vector databases for maintaining session memory are not becoming massive technical debt overnight.

They remain a requirement until AI models natively host permanent, cross-session storage.

Frequently Asked Questions

What is the context window limit for Gemini 3.1?

The verified input context window limit for Gemini 3.1 Pro is 1,048,576 tokens.

How does Gemini 3.1 context retention change RAG architecture?

It offsets the immediate load on vector databases by caching up to 1 million tokens for active sessions, lowering the frequency of repetitive data retrieval.

How to implement native state hydration in conversational AI?

Google does not offer true native state hydration. Developers must implement Explicit Caching via the Vertex AI or Gemini APIs and manually manage cache TTLs.

Does Gemini Live hold context twice as long?

This claim cannot be verified. Context retention duration is dictated by the TTL set by the developer during the explicit caching process.

How to import cross-provider chat history via API?

There is no verified Google API that allows direct, cross-provider ingestion of chat histories from competitors like OpenAI.

Do I still need a vector database with Gemini 3.1?

Yes. Vector databases remain necessary for permanent user memory and indexing data that exceeds the 1 million token threshold.

How to manage session state in Google Gemini?

Session state is managed by caching the conversation prefix and passing the cached resource name back to the model during subsequent prompt requests.
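
As a rough illustration, the client only needs to hold the cache's resource name and the turns added since the prefix was cached. The class and the request field names below (`cached_content`, `contents`, `role`, `text`) are assumptions chosen for readability, not the exact wire format of the Gemini or Vertex AI APIs.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class CachedSession:
    """Tracks a cache handle plus the turns not covered by the cache."""

    cache_name: str  # resource name returned when the prefix was cached
    new_turns: List[Dict[str, str]] = field(default_factory=list)

    def add_user_turn(self, text: str) -> None:
        self.new_turns.append({"role": "user", "text": text})

    def add_model_turn(self, text: str) -> None:
        self.new_turns.append({"role": "model", "text": text})

    def build_request(self) -> Dict[str, object]:
        """Reference the cached prefix by name; send only the new turns."""
        return {
            "cached_content": self.cache_name,
            "contents": list(self.new_turns),  # copy so callers cannot mutate state
        }
```

In this shape the large prefix never leaves the client again after the initial upload. Each request carries a short handle and the incremental turns.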

What is the latency improvement in Gemini Live 3.1?

While exact percentage improvements are not officially published, utilizing context caches avoids recomputing tokens, resulting in significantly faster response times.

How to build conversational state machines without external DBs?

You can maintain state temporarily within Gemini's 1 million token context window using explicit caching, provided the session does not outlive the cache's maximum retention period.
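
One way to encode that condition is a small guard that decides whether cache-only state is plausible before a session starts. The function name and parameters are hypothetical; the token limit is the 1,048,576 figure cited earlier in this FAQ.

```python
CONTEXT_WINDOW_TOKENS = 1_048_576  # Gemini 3.1 Pro input limit cited in this FAQ


def fits_in_cache(session_tokens: int,
                  expected_session_seconds: float,
                  cache_ttl_seconds: float,
                  needs_permanent_memory: bool = False) -> bool:
    """Return True if cache-only state is plausible for this session."""
    if needs_permanent_memory:
        return False  # nothing in a TTL-bound cache is permanent
    if session_tokens > CONTEXT_WINDOW_TOKENS:
        return False  # state no longer fits in one context window
    # The session must end before the cache entry expires.
    return expected_session_seconds <= cache_ttl_seconds
```

When the guard returns False, the session needs an external store such as a vector database. A 30-minute session with 200,000 tokens and a one-hour TTL passes; the same session with a requirement for permanent user memory does not.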

What are the developer updates in the March 2026 Gemini drop?

Key updates include the release of Gemini 3.1 Pro, a new Medium thinking parameter for balanced compute, and expanded native multimodal code rendering capabilities.