The Hidden Cloud Tax of Building AI Study Tools

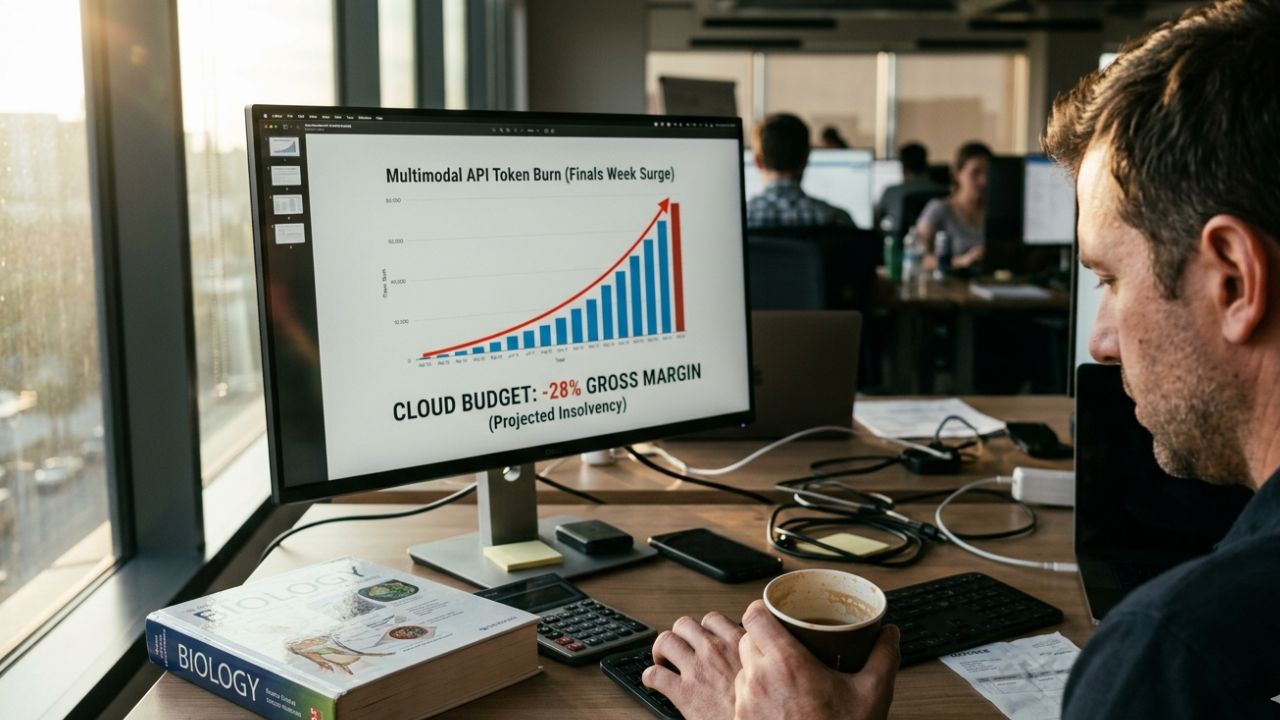

EdTech CTOs are cheering the AI tutor boom, but 80% are quietly blowing through their cloud budgets. Replicating Gemini’s six study capabilities is pulling SaaS providers into a large, hidden cloud compute trap.

Following Google’s Gemini AI study features update, the consumer expectation is that educational software must instantly process massive textbook PDFs, generate quizzes on the fly, and provide multimodal feedback natively. Students now assume this is standard functionality.

However, allowing unconstrained multimodal inputs for millions of students, particularly during the synchronized traffic surge of "finals week," can destroy SaaS unit economics unless token usage is aggressively throttled and repeat answers are served from a cache.

The Multimodal Trap: Calculating the True Cost of PDF Ingestion

When engineering teams built early text-based chatbots, the cost parameters were predictable. Text tokens are incredibly cheap, allowing product managers to offer generous free tiers. That mathematical safety net is now gone.

Multimodal document ingestion changes the cost model. When a student uploads a 300-page biology textbook containing complex diagrams, high-resolution microscope imagery, and intricate charts, the LLM isn't just reading text. Every page image is also encoded into tokens that are loaded into the context window.

An image is not billed as a single cheap token: one high-resolution image or PDF page can consume hundreds to thousands of tokens, far more than the text on that page would. If a student prompts the AI to "explain the diagrams in Chapter 4," the size of the API request grows accordingly. Multiply this interaction by a user base of 500,000 students conducting daily study sessions, and the monthly API bill can climb from tens of thousands of dollars to millions.
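To make the scale concrete, here is a rough back-of-the-envelope estimate in Python. Every number in it is an assumption chosen for illustration (tokens per page, price per million input tokens, uploads per month), and the names `IngestionAssumptions`, `monthly_ingestion_cost`, and `cost_per_student` are hypothetical. Replace the defaults with the figures from your own provider's pricing page and usage logs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IngestionAssumptions:
    """Illustrative inputs only; none of these are real provider prices."""
    pages_per_upload: int = 300
    tokens_per_page: int = 1_000                   # assumed blend of text and image tokens
    price_per_million_input_tokens: float = 1.00   # assumed price in USD
    active_students: int = 500_000
    uploads_per_student_per_month: int = 4         # assumed usage pattern


def monthly_ingestion_cost(a: IngestionAssumptions) -> float:
    """Estimated monthly input-token cost in USD for document ingestion only."""
    tokens_per_upload = a.pages_per_upload * a.tokens_per_page
    cost_per_upload = tokens_per_upload / 1_000_000 * a.price_per_million_input_tokens
    return cost_per_upload * a.active_students * a.uploads_per_student_per_month


def cost_per_student(a: IngestionAssumptions) -> float:
    """Monthly ingestion cost attributable to a single student, in USD."""
    return monthly_ingestion_cost(a) / a.active_students


if __name__ == "__main__":
    assumptions = IngestionAssumptions()
    print(f"Monthly ingestion cost: ${monthly_ingestion_cost(assumptions):,.0f}")
    print(f"Per student per month:  ${cost_per_student(assumptions):.2f}")
```

Under these assumed inputs, ingestion alone comes to about $1.20 per student per month, before any chat turns, quiz generation, or output tokens are counted. The useful part is the structure of the calculation, not the specific figures: the cost scales linearly with pages, tokens per page, and upload frequency, so each of those is a lever for throttling.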

CTOs must realize that multimodal capabilities are not a simple feature toggle. They represent a fundamental shift in how cost of goods sold (COGS) is calculated for digital educational products. Offering unlimited multimodal uploads on a $9.99/month student subscription is a guaranteed path to negative gross margins.

FinOps for EdTech: Surviving 'Finals Week' Server Spikes

The EdTech industry is uniquely cursed with extreme seasonality. Traffic doesn't grow linearly; it explodes during midterm and final exam weeks. For a traditional application, you simply spin up more AWS or Azure instances to handle the load.

But when your application relies heavily on third-party LLM APIs (like OpenAI, Anthropic, or Google Cloud), auto-scaling your own servers is only half the battle. You are simultaneously sending a DDoS-level volume of requests to a paid API endpoint.

Surviving these spikes requires rigorous Financial Operations (FinOps) governance. Engineering teams must implement hard concurrency limits, graceful degradation of service (e.g., falling back to a cheaper, faster model such as Claude Haiku or Gemini Flash during peak hours), and strict per-user rate limits based on subscription tier.
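The three controls named above (a hard concurrency cap, fallback to a cheaper model under load, and per-tier rate limits) can be sketched in a few dozen lines. This is a minimal single-process illustration, not a production design: the tier limits, the model names, and the `RequestGovernor` class are all hypothetical, and a real deployment would need shared state (for example in Redis) and locking.

```python
import time
from typing import Dict, Optional

# Hypothetical values for illustration only.
TIER_LIMITS_PER_MINUTE = {"free": 5, "premium": 30}
PRIMARY_MODEL = "large-model"
FALLBACK_MODEL = "small-fast-model"


class TokenBucket:
    """Classic token bucket: holds up to `per_minute` tokens, refilled continuously."""

    def __init__(self, per_minute: int, now: Optional[float] = None):
        self.capacity = float(per_minute)
        self.refill_per_second = per_minute / 60.0
        self.tokens = self.capacity
        self.updated_at = time.monotonic() if now is None else now

    def allow(self, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated_at)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.updated_at = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


class RequestGovernor:
    """Decides whether a request runs, and on which model. Not thread-safe."""

    def __init__(self, max_concurrent: int, degrade_at: float = 0.8):
        self.max_concurrent = max_concurrent
        self.degrade_at = degrade_at          # fraction of capacity that triggers fallback
        self.in_flight = 0
        self.buckets: Dict[str, TokenBucket] = {}

    def admit(self, user_id: str, tier: str, now: Optional[float] = None) -> Optional[str]:
        """Return the model name to use, or None if the request is rejected."""
        if user_id not in self.buckets:
            self.buckets[user_id] = TokenBucket(TIER_LIMITS_PER_MINUTE[tier], now)
        if self.in_flight >= self.max_concurrent:
            return None                       # hard concurrency limit reached
        if not self.buckets[user_id].allow(now):
            return None                       # per-user rate limit reached
        self.in_flight += 1
        if self.in_flight > self.degrade_at * self.max_concurrent:
            return FALLBACK_MODEL             # degrade to the cheaper model under load
        return PRIMARY_MODEL

    def release(self) -> None:
        """Call when a request finishes, whether it succeeded or failed."""
        self.in_flight = max(0, self.in_flight - 1)
```

The caller is expected to wrap each LLM request in `admit(...)` and `release()` (ideally in a `try`/`finally`), and to return a "please retry shortly" message when `admit` returns `None`. The optional `now` argument exists only so the behavior can be tested deterministically.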

Implementing Semantic Caching for Common Student Questions

The most egregious waste of an AI cloud budget occurs when an LLM is forced to repeatedly generate answers for the exact same question. During an Introduction to Psychology midterm, thousands of students will invariably ask the AI tutor to "Explain classical conditioning."

Forward-thinking CTOs are deploying semantic caching layers, such as Redis combined with vector similarity search. When a student asks a question, the system first converts the query into a lightweight vector embedding.

It then checks the cache. If a previous student asked a semantically similar question (e.g., "What is Pavlovian conditioning?"), the system bypasses the expensive LLM API call entirely and instantly serves the cached answer.

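Implementing a robust semantic cache with a cosine-similarity threshold of around 0.95 can intercept up to 40% of routine queries during exam week, depending on how repetitive the questions are. A cache hit replaces a full LLM call with an embedding lookup, which cuts API costs and typically brings response latency down to a small fraction of a generated answer. The threshold matters: set it too low and students receive answers to questions they did not ask.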
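The lookup-then-fallback flow described above can be sketched as follows. This is an in-memory illustration under stated assumptions, not a Redis integration: `SemanticCache` and `answer_with_cache` are hypothetical names, the `embed` and `call_llm` functions are supplied by the caller, and the linear scan over entries stands in for the vector index a real deployment would use.

```python
import math
from typing import Callable, List, Optional, Sequence, Tuple

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class SemanticCache:
    """Stores (embedding, answer) pairs and serves the closest match above a threshold."""

    def __init__(self, embed: Callable[[str], Vector], threshold: float = 0.95):
        self.embed = embed                    # caller-supplied embedding function
        self.threshold = threshold
        self.entries: List[Tuple[Vector, str]] = []

    def lookup(self, query: str) -> Optional[str]:
        query_vec = self.embed(query)
        best_score, best_answer = 0.0, None
        for vec, answer in self.entries:      # linear scan; a vector index replaces this at scale
            score = cosine_similarity(query_vec, vec)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None

    def store(self, query: str, answer: str) -> None:
        self.entries.append((self.embed(query), answer))


def answer_with_cache(query: str, cache: SemanticCache,
                      call_llm: Callable[[str], str]) -> str:
    """Serve from the cache when possible; otherwise call the LLM and remember the result."""
    cached = cache.lookup(query)
    if cached is not None:
        return cached
    answer = call_llm(query)
    cache.store(query, answer)
    return answer
```

One design point worth noting: answers that depend on a student's own uploaded material or chat history should not be shared through a global cache like this one. Scoping the cache per course, or caching only context-free questions, avoids serving one student's personalized answer to another.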

Throttling Agentic Workflows and Context Windows

As EdTech apps evolve from simple chatbots to autonomous agents, the cost complexity increases. Agentic workflows often involve the AI "thinking" in loops—querying a database, evaluating the result, refining the search, and finally generating a response.

Each iteration in this loop consumes tokens. If an AI tutor gets stuck in a hallucination loop trying to solve a complex calculus problem, it can burn through massive context windows rapidly.

Developers must impose strict "max_tokens" and "max_iterations" limits on agentic backend processes. Furthermore, the context window must be ruthlessly pruned. Instead of passing the entire chat history back to the API with every new message, the system should actively summarize older parts of the conversation and drop irrelevant context to keep the payload lightweight.
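Both ideas (capping the agent loop and pruning the chat history) can be illustrated with a short sketch. The function names, the `step` and `summarize` callables, and the default limits are hypothetical. Note also that `max_total_tokens` here is a budget across the whole loop, which is a different control from the per-response `max_tokens` parameter that LLM APIs typically expose.

```python
from typing import Callable, Dict, List, Tuple

Message = Dict[str, str]
# A step takes the task and the notes so far, and returns (done, output, tokens_used).
Step = Callable[[str, List[str]], Tuple[bool, str, int]]


def run_agent(task: str, step: Step,
              max_iterations: int = 5, max_total_tokens: int = 4_000) -> Dict[str, object]:
    """Run an agent loop that stops on success, iteration cap, or token budget."""
    notes: List[str] = []
    tokens_spent = 0
    for iteration in range(1, max_iterations + 1):
        done, output, tokens_used = step(task, notes)
        tokens_spent += tokens_used
        if done:
            return {"status": "ok", "answer": output,
                    "iterations": iteration, "tokens": tokens_spent}
        if tokens_spent >= max_total_tokens:
            return {"status": "token_budget_exceeded", "answer": None,
                    "iterations": iteration, "tokens": tokens_spent}
        notes.append(output)                  # intermediate result feeds the next step
    return {"status": "max_iterations_reached", "answer": None,
            "iterations": max_iterations, "tokens": tokens_spent}


def prune_history(messages: List[Message], keep_recent: int,
                  summarize: Callable[[List[Message]], str]) -> List[Message]:
    """Keep the last `keep_recent` messages verbatim and collapse the rest into a summary."""
    if keep_recent < 1:
        raise ValueError("keep_recent must be at least 1")
    if len(messages) <= keep_recent:
        return list(messages)
    older, recent = messages[:-keep_recent], messages[-keep_recent:]
    summary: Message = {
        "role": "system",
        "content": "Summary of earlier conversation: " + summarize(older),
    }
    return [summary] + list(recent)
```

In practice `summarize` would itself be a call to a cheap model, so it is worth running it only when the history crosses a size threshold rather than on every message. When `run_agent` returns a non-`ok` status, the application can show the student a partial result or a prompt to rephrase instead of silently retrying.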

Defending SaaS Unit Economics Against Free Big Tech Alternatives

The existential threat remains: Google is offering advanced study tools for free within Gemini. How does a paid EdTech platform survive when its core features are commoditized?

The answer lies in pivoting away from generic knowledge retrieval and leaning heavily into proprietary data and specialized workflows. An EdTech platform must offer value that Gemini cannot access—such as deep integration with a university's specific grading rubrics, closed-loop accreditation tracking, or highly specialized vocational simulations.

This is a broader architectural challenge facing the entire software industry. Just as entertainment platforms are currently struggling with managing massive multimodal inference costs at scale, EdTech leaders must recognize that survival depends on hyper-efficiency.

By implementing strict FinOps governance, leveraging semantic caching, and carefully throttling multimodal context windows, CTOs can protect their SaaS unit economics and build AI tutors that are both highly intelligent and financially viable.

Frequently Asked Questions (FAQs)

How much do multimodal AI APIs cost?

Multimodal API costs are calculated based on token usage, and images consume far more tokens than text. A single high-resolution image or PDF page can consume hundreds to thousands of tokens. When thousands of students upload complex materials simultaneously, costs grow much faster than they did for legacy text-only chat systems, severely impacting gross margins.

How to reduce LLM token usage in EdTech apps?

Engineers must employ aggressive context management strategies. This includes using vector databases to retrieve only the most relevant text chunks (RAG), actively summarizing and truncating older chat history before making API calls, and enforcing strict maximum token limits on both input prompts and generated responses.

What is the true ROI of custom AI tutors?

The true ROI of a custom AI tutor is heavily dependent on execution. While it can drive massive user engagement and subscription upgrades, the backend API costs can quickly erode profits if unmanaged. Positive ROI is only achieved when the increase in student retention and premium tier conversions outpaces the underlying token burn rate.

How to use semantic caching for student questions?

Semantic caching involves converting incoming student queries into vector embeddings and storing them in a high-speed database like Redis. Before calling an expensive LLM, the system checks if a semantically similar question was recently asked and answered. If a match is found, the cached response is served instantly, bypassing the API cost entirely.

Why are EdTech cloud compute costs rising?

Costs are rising because student expectations have shifted from static text retrieval to dynamic, multimodal generative AI. Generating personalized practice quizzes, parsing massive textbook PDFs in real-time, and running autonomous agentic grading loops require significantly more processing power and expensive third-party API calls than traditional database hosting.