Why Your MacBook M4 Max vs. Windows for AI Choice Will Fail

- See which architecture actually handles local agentic workflows without throttling.

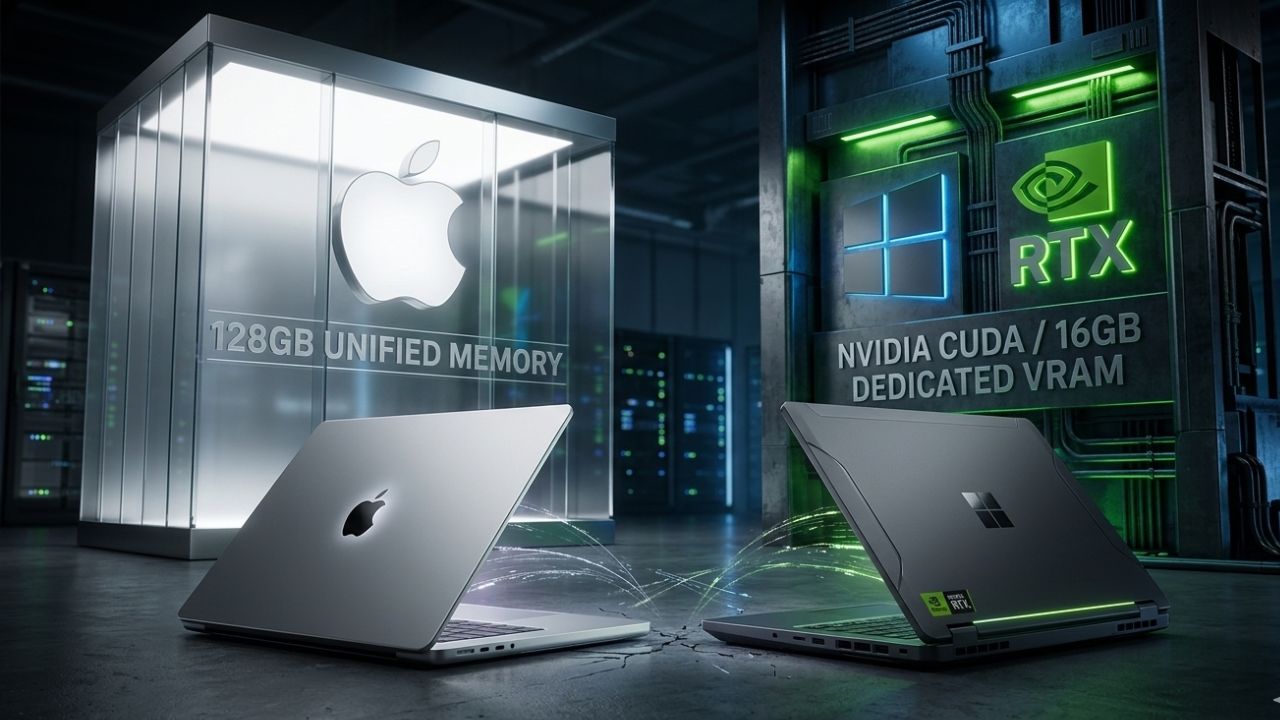

- Unified memory allows massive models to load, but the lack of native CUDA forces workarounds.

- Windows machines offer raw GPU supremacy, but face severe battery and thermal throttling off the charger.

Your engineers are fighting a holy war over Mac vs. Windows, but the benchmark data tells a different story. The wrong hardware decision doesn't just slow down compile times; it stalls local agentic workflows the moment a model or its context outgrows available memory. As detailed in our master guide, Best AI Laptop Local LLM Guide: The Specs Big Tech Hides, the underlying architecture dictates your ceiling.

The MacBook M4 Max vs. Windows for AI debate has a clear winner for local models, but only once you match the choice to your exact library dependencies. Check the data before you spend budget on an incompatible architecture and lock in an inference bottleneck. You cannot out-code a hardware bottleneck. Below is the technical teardown you need to make the right procurement choice.

Deep Dive: Architecture Workflows and Bottlenecks

The Apple Silicon Approach

Apple's strategy relies on unified memory. Because the CPU, GPU, and Neural Engine share the same memory pool, you can load a quantized 70B-parameter model on a 128GB M4 Max. However, bandwidth is the silent killer. Token generation is largely memory-bandwidth-bound, because each new token requires reading essentially all of the model's weights. While the memory pool is large, the M4 Max's memory bandwidth (up to 546 GB/s on the top configuration) lags behind high-end desktop discrete GPUs, leading to slower token generation during inference.
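The capacity-versus-bandwidth trade-off can be sketched with back-of-envelope arithmetic. The snippet below is a rough estimate, not a benchmark: the function names are hypothetical, the 546 GB/s figure is the top M4 Max configuration, and the ceiling assumes every generated token streams all weights exactly once, which real runtimes only approximate.

```python
# Back-of-envelope sizing for local inference.
# Assumption: weights dominate memory traffic during token generation.

def weight_footprint_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9


def decode_tokens_per_sec_ceiling(weights_gb: float, bandwidth_gb_s: float) -> float:
    """Upper bound on tokens/s if each token requires reading all weights once."""
    return bandwidth_gb_s / weights_gb


if __name__ == "__main__":
    POOL_GB = 128          # unified memory pool from the article
    BANDWIDTH_GB_S = 546   # top M4 Max configuration (assumed for this sketch)

    for bits in (16, 8, 4):
        size = weight_footprint_gb(70, bits)
        verdict = "fits" if size < POOL_GB else "does not fit"
        ceiling = decode_tokens_per_sec_ceiling(size, BANDWIDTH_GB_S)
        print(f"70B @ {bits}-bit: {size:.0f} GB ({verdict}), "
              f"ceiling ~{ceiling:.1f} tokens/s")
```

Treat the output as a ceiling. Context memory, runtime overhead, and the share of unified memory the GPU can actually address all push real numbers lower.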

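Furthermore, you depend on Metal-based backends: Apple's MLX framework, llama.cpp built for Metal, or PyTorch's MPS backend. CUDA does not run on Apple Silicon, so if your team relies on deeply integrated Nvidia CUDA libraries, expect to port or replace them, and expect any fallback path to cost you severe performance penalties.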
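For teams on PyTorch, the dependency split shows up as soon as code selects a device. The helper below is a minimal sketch: `pick_device` is a hypothetical name, and it falls back to the CPU when PyTorch is not installed or no accelerator is visible.

```python
import importlib.util


def pick_device() -> str:
    """Return 'cuda', 'mps', or 'cpu' depending on what PyTorch can see."""
    if importlib.util.find_spec("torch") is None:
        return "cpu"  # PyTorch is not installed, so no accelerator backend

    import torch

    if torch.cuda.is_available():
        return "cuda"  # Nvidia GPU with a working CUDA build of PyTorch

    # Older PyTorch builds may not ship the MPS backend at all.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"   # Apple Silicon GPU via Metal Performance Shaders

    return "cpu"


if __name__ == "__main__":
    print(f"Selected device: {pick_device()}")
```

Code that hard-codes `"cuda"` instead of a check like this is the first thing to audit before standardizing on Macs. Libraries that ship only CUDA kernels will not run on `mps` regardless of how the device is chosen.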

The Windows / Nvidia Discrete GPU Approach

Windows laptops equipped with dedicated RTX graphics cards offer raw compute power and out-of-the-box compatibility with nearly all of the CUDA-first open-source AI ecosystem. The downside is strict VRAM segregation. A top-tier laptop GPU such as the RTX 4090 Laptop maxes out at 16GB of VRAM.

If your model doesn't fit into that dedicated pool, it spills over into system RAM. Once offloading occurs, the spilled layers run from much slower system memory, and token generation drops to a crawl. If you are trying to understand the exact thresholds of this spillover, check our guide on the minimum RAM for Llama 4 to see how context windows eat into available memory.
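The spillover threshold depends on the context window as well as the weights, because the key/value cache grows linearly with context length. The sketch below uses the standard KV-cache size formula. The model shape (32 layers, 8 KV heads, head dimension 128), the 4 GB weight figure, and the 1 GB overhead are illustrative assumptions for an 8B-class model, not measurements.

```python
def kv_cache_gb(n_layers: int, n_kv_heads: int, head_dim: int,
                context_tokens: int, bytes_per_value: int = 2) -> float:
    """KV-cache size in GB; the leading 2 covers keys and values."""
    total_bytes = (2 * n_layers * n_kv_heads * head_dim
                   * context_tokens * bytes_per_value)
    return total_bytes / 1e9


def fits_in_vram(weights_gb: float, kv_gb: float, vram_gb: float,
                 overhead_gb: float = 1.0) -> bool:
    """True if weights, KV cache, and a fixed overhead all fit in VRAM."""
    return weights_gb + kv_gb + overhead_gb <= vram_gb


if __name__ == "__main__":
    WEIGHTS_GB = 4.0   # assumed: roughly an 8B model at 4-bit quantization
    VRAM_GB = 16.0     # laptop VRAM ceiling cited in the article

    for ctx in (8_192, 32_768, 131_072):
        kv = kv_cache_gb(n_layers=32, n_kv_heads=8, head_dim=128,
                         context_tokens=ctx)
        status = "fits" if fits_in_vram(WEIGHTS_GB, kv, VRAM_GB) else "spills"
        print(f"context {ctx:>7}: KV cache {kv:5.2f} GB -> {status}")
```

Under these assumptions the same model that fits comfortably at a short context spills out of a 16GB card at a very long one, which is why context length belongs in the procurement math.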

Mac vs Windows: Enterprise AI Capability Matrix

| Feature/Metric | MacBook M4 Max | Windows (Dedicated RTX) |
|---|---|---|
| Max Memory for Models | Up to 128GB (Unified) | Up to 16GB (VRAM Ceiling) |
| Framework Compatibility | MLX, Metal, CoreML | CUDA, TensorRT, xFormers |
| Power Efficiency | Exceptional (No throttling on battery) | Poor (Throttles heavily off wall power) |
| Best Use Case | Massive parameter local inference | Rapid training, fine-tuning, standard RAG |

The Hidden Trap: Unified Memory vs. Native Compute

What most teams get wrong about the MacBook M4 Max vs. Windows for AI debate is confusing "capacity" with "capability." Engineering leads see a 128GB MacBook and assume it replaces an enterprise desktop. The hidden trap is that while the M4 Max's unified memory lets you load the weights of a massive model, the lack of dedicated tensor cores means the actual mathematical computation (matrix multiplication) is drastically slower than on a Windows machine with a discrete RTX GPU. The gap is widest in compute-bound work such as long-prompt processing and training.
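The capacity-versus-capability distinction can be illustrated with a rough compute estimate. Prompt processing costs about 2 floating-point operations per parameter per token, so its duration is governed by matrix-multiply throughput, not by pool size. In the sketch below, the `Device` profiles and their TFLOPS values are hypothetical placeholders chosen only to show the shape of the calculation; substitute measured figures for real hardware.

```python
from dataclasses import dataclass


@dataclass
class Device:
    name: str
    matmul_tflops: float  # sustained dense matmul throughput (assumed value)


def prefill_seconds(params_billion: float, prompt_tokens: int,
                    device: Device) -> float:
    """Estimated time to process a prompt, assuming compute-bound prefill.

    Uses the common approximation of 2 FLOPs per parameter per token.
    """
    flops = 2 * params_billion * 1e9 * prompt_tokens
    return flops / (device.matmul_tflops * 1e12)


if __name__ == "__main__":
    # Hypothetical profiles: the numbers are placeholders, not benchmarks.
    devices = [
        Device("large pool, modest matmul", matmul_tflops=30.0),
        Device("small pool, fast matmul", matmul_tflops=150.0),
    ]
    for dev in devices:
        secs = prefill_seconds(params_billion=8, prompt_tokens=4000, device=dev)
        print(f"{dev.name}: ~{secs:.2f} s to ingest a 4000-token prompt")
```

Agentic workflows repeatedly feed long tool outputs back into the model, so prompt ingestion time compounds across steps even when the weights fit comfortably in memory.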

Conversely, Windows buyers fall into the trap of purchasing Copilot+ PCs with NPU hardware, incorrectly assuming that an NPU can stand in for a discrete GPU in high-end local LLM inference. NPUs are built for background blurring and other lightweight, low-power tasks; they cannot handle serious generative AI loads.

Frequently Asked Questions

Is Apple Silicon better than Nvidia for AI?

It is better for power efficiency and high-capacity memory pooling on a laptop form factor. However, Nvidia remains vastly superior for raw computational speed and software ecosystem compatibility.

What is the MacBook M4 Max unified memory advantage?

It allows the system's large RAM pool to act as video memory, enabling you to load 70B+ parameter models on a laptop that would otherwise require multiple desktop graphics cards.

Can Windows laptops run local LLMs faster than Mac?

Yes, provided the model and its context fit entirely within the dedicated VRAM of the laptop's Nvidia GPU. In that case, token generation will generally outpace Apple Silicon running Metal-based backends.

Does Mac support CUDA for AI?

No. Mac does not support CUDA. Developers must use Apple's MLX framework or Metal-optimized backends like llama.cpp to run models efficiently on macOS.

What is the best Windows laptop for AI programming?

Look for workstation-class laptops equipped with an Nvidia RTX 4080 or 4090 laptop GPU. Focus strictly on maximum VRAM and an efficient cooling chassis to prevent thermal throttling.

How does M4 Max neural engine compare to dedicated GPUs?

The Neural Engine is optimized for low-power, specific tasks (like image processing or audio translation). It is vastly underpowered for high-speed LLM inference compared to dedicated desktop-class GPUs.

Are AI developers switching to Mac?

Many are, because the large unified memory pool lets them run massive open-source models locally without server access, despite the slower token generation speeds.

What are the limitations of M4 Max for machine learning?

The primary limitations are the lack of CUDA support, which breaks Python AI libraries that ship only CUDA kernels, and lower memory bandwidth than the GDDR6/GDDR7 memory found in high-end discrete GPUs.

Is Windows Copilot+ better than macOS for AI?

For running local open-source LLMs, no. Copilot+ focuses on low-power NPUs for operating system features, whereas macOS allows developers to utilize the entire unified memory pool for custom models.

How do you set up an AI environment on Windows vs. Mac?

On Windows, install WSL2, the Nvidia driver and CUDA toolkit, and a CUDA-enabled build of PyTorch. On Mac, install Homebrew and Python, then rely on the Apple MLX framework or Metal-compiled binaries for inference.

Sources & References

- Performance analysis of unified memory architectures in machine learning, IEEE Transactions on Computers, 2024.

- NIST AI Risk Management Framework (AI RMF): Considerations for localized hardware deployment and vendor management.

- Best AI Laptop Local LLM Guide: The Specs Big Tech Hides

- Understanding RTX 5090 VRAM requirements

Choosing between Apple Silicon and Windows for your AI deployment dictates your team's software limitations for the next three years. If you require massive memory capacity for inference on the go, Apple's unified memory wins. If you require standard CUDA compatibility, fast training, and raw speed, you must purchase a high-VRAM Windows workstation.