RTX 5090 VRAM Requirements: 5 Steps to Cut Hardware Costs

- Guessing your RTX 5090 VRAM requirements will bottleneck your AI agents and waste budget.

- You must learn the exact memory thresholds for local deployment to prevent system crashes.

- Proper provisioning gives the data quality practices in ISO/IEC 5259-2 (data quality measures for analytics and machine learning) a stable hardware foundation; the standard itself does not specify hardware.

Guessing your AI hardware thresholds is a massive risk. Under-provisioning GPU memory is the fastest way to kill your engineering team's velocity: crashing models, out-of-memory (OOM) errors, and bottlenecked agentic workflows will quickly drain your budget. Early in your deployment strategy, you must decide whether you are buying a laptop for local LLM work or a dedicated workstation.

Stop guessing and check the hard data on exact VRAM thresholds for local models. I understand the pressure to get these procurement decisions right without overspending. As detailed in our master guide to selecting a laptop for local LLM deployment, grounding your infrastructure choices in strict technical realities is the only way to succeed.

The Hidden Trap: What Most Teams Get Wrong About High-End GPUs

Many engineering leads assume that simply throwing the newest flagship GPU at a problem will resolve all local deployment bottlenecks. This is a critical misconception. While anticipation for future architectures like a theoretical RTX 6090 continues to build, the reality is that raw compute power cannot overcome strict memory limitations.

When high-end GPUs lack the necessary VRAM to hold model weights and the KV cache simultaneously, the system is forced to offload data to system RAM. This triggers catastrophic latency. You aren't just losing speed; you are functionally breaking agentic workflows that rely on rapid, successive inferences.
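The latency cliff described above can be approximated with a simple memory-bound model: during decoding, each generated token has to read every weight once, so throughput is capped by how fast each memory tier can deliver its share. The sketch below is an upper-bound estimate only. It ignores compute time, batching, and caching, and both bandwidth figures are assumptions chosen for illustration (a nominal 1792 GB/s for on-card memory and a hypothetical 64 GB/s for the slower tier).

```python
def decode_tokens_per_s(vram_resident_gb: float, offloaded_gb: float,
                        vram_bw_gbps: float, slow_bw_gbps: float) -> float:
    """Upper bound on decode speed when each token reads all weights once.

    Sizes are in GB and bandwidths in GB/s. Compute time is ignored, so
    real throughput will be lower than this estimate.
    """
    if vram_resident_gb < 0 or offloaded_gb < 0:
        raise ValueError("sizes must be non-negative")
    if vram_resident_gb + offloaded_gb == 0:
        raise ValueError("model size must be positive")
    if vram_bw_gbps <= 0 or slow_bw_gbps <= 0:
        raise ValueError("bandwidths must be positive")
    # Time per token is the sum of the time spent reading each tier.
    seconds_per_token = (vram_resident_gb / vram_bw_gbps
                         + offloaded_gb / slow_bw_gbps)
    return 1.0 / seconds_per_token


if __name__ == "__main__":
    VRAM_BW = 1792.0  # GB/s, assumed nominal on-card bandwidth
    SLOW_BW = 64.0    # GB/s, hypothetical slow-tier bandwidth
    fully_resident = decode_tokens_per_s(30, 0, VRAM_BW, SLOW_BW)
    partly_offloaded = decode_tokens_per_s(24, 6, VRAM_BW, SLOW_BW)
    print(f"30 GB fully in VRAM: {fully_resident:.1f} tokens/s (upper bound)")
    print(f"6 GB of 30 offloaded: {partly_offloaded:.1f} tokens/s (upper bound)")
```

Under these assumptions, moving only a fifth of the weights to the slow tier cuts the ceiling by more than a factor of six, because the slow tier dominates the time per token.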

Before you commit your entire hardware budget to desktop-class cards, you must also evaluate lateral options. Mobile architectures behave very differently in our benchmark data; check out our MacBook M4 Max vs Windows for AI comparison to see how unified memory changes the equation entirely.

5 Steps to Cut Hardware Costs and Optimize VRAM

Step 1: Calculate the Exact Parameter-to-VRAM Baseline

Do not rely on vendor minimums. To determine the absolute baseline VRAM required just to load the weights of a quantized model, you must use rigorous mathematical modeling rather than guesswork.

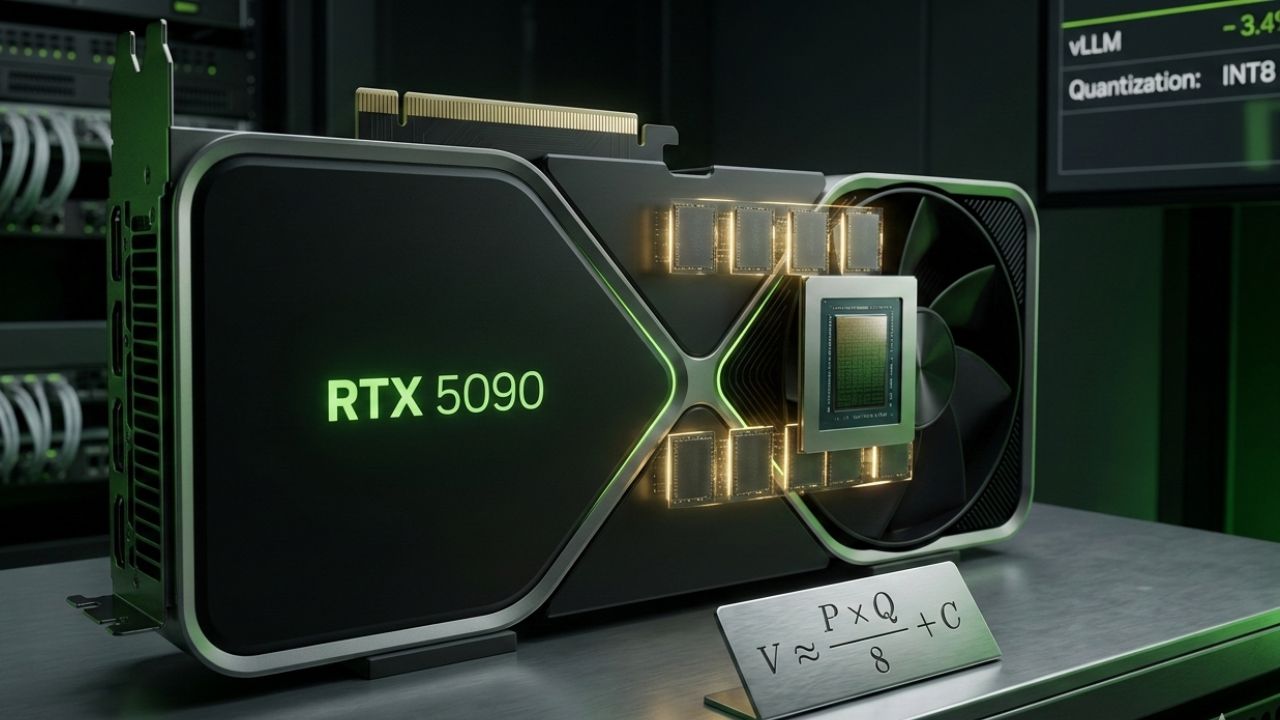

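To estimate the memory footprint in gigabytes for quantized inference, you can use the formula $V \approx \frac{P \times Q}{8} + C$, where $P$ is the parameter count in billions, $Q$ is the quantization precision in bits per weight, and $C$ is the context window overhead in gigabytes. For example, a 70B model at 4 bits needs about $70 \times 4 / 8 = 35$ GB for the weights alone, before any context overhead.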
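As a minimal sketch, the formula translates directly into a small helper. The function name `estimate_vram_gb` and the 2 GB context overhead used in the demo are illustrative assumptions, not measured values.

```python
def estimate_vram_gb(params_billions: float, bits: float,
                     context_overhead_gb: float = 0.0) -> float:
    """Apply V = P * Q / 8 + C, with the result in gigabytes."""
    if params_billions <= 0 or bits <= 0:
        raise ValueError("parameter count and bit width must be positive")
    if context_overhead_gb < 0:
        raise ValueError("context overhead must be non-negative")
    # P billion parameters at Q bits each is P * Q / 8 gigabytes of weights.
    return params_billions * bits / 8 + context_overhead_gb


if __name__ == "__main__":
    # The 2 GB overhead is a placeholder; measure your own workload.
    for label, p, q in [("8B FP16", 8, 16), ("32B 4-bit", 32, 4), ("70B 4-bit", 70, 4)]:
        print(f"{label}: {estimate_vram_gb(p, q, context_overhead_gb=2.0):.1f} GB")
```

Real quantized files often store slightly more than the nominal bits per weight because of scaling metadata, so treat the result as a floor rather than a guarantee.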

Step 2: Provision for the KV Cache

The KV (Key-Value) cache expands dynamically based on your context window and batch size. If your team is running heavy RAG (Retrieval-Augmented Generation) pipelines, your RTX 5090 VRAM requirements will spike dramatically.

Always allocate a 20-30% VRAM buffer specifically for context handling. Running an unoptimized local model at maximum context on insufficient VRAM will crash the application with an out-of-memory error.
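To see why the cache grows with context and batch size, the sketch below uses the standard sizing rule for a transformer KV cache: two tensors (keys and values) per layer, each holding one vector per KV head per token. The model shape in the demo (32 layers, 8 KV heads, head dimension 128) is a hypothetical configuration for illustration, and 2 bytes per value assumes an FP16 cache.

```python
def kv_cache_gb(num_layers: int, num_kv_heads: int, head_dim: int,
                seq_len: int, batch_size: int = 1,
                bytes_per_value: int = 2) -> float:
    """Estimate KV cache size in GB (10^9 bytes)."""
    # The leading factor of 2 accounts for storing both keys and values.
    total_bytes = (2 * num_layers * num_kv_heads * head_dim
                   * seq_len * batch_size * bytes_per_value)
    return total_bytes / 1e9


if __name__ == "__main__":
    # Hypothetical model shape; substitute the values from your model config.
    for seq_len in (8_192, 32_768, 131_072):
        size = kv_cache_gb(num_layers=32, num_kv_heads=8, head_dim=128,
                           seq_len=seq_len)
        print(f"context {seq_len:>7}: {size:.2f} GB per sequence")
```

The cache scales linearly with both context length and batch size, which is why long-context RAG pipelines with several concurrent requests can outgrow a buffer that looked generous for a single short prompt.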

Step 3: Implement Aggressive Quantization

You do not need full FP16 precision for every production task. Leveraging INT8 or 4-bit quantization (for example AWQ, or the quantized variants distributed in GGUF files) drastically lowers your memory footprint. This is often the single most effective way to cut hardware costs, usually with little noticeable loss in output quality on high-end GPUs.
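A quick way to see the effect is to check which precisions leave room on a given card once a context buffer is reserved. This is a rough sketch: the 25% reserve is an assumption taken from the middle of the 20-30% buffer range, and the nominal bit widths ignore the small metadata overhead that real quantized formats carry.

```python
PRECISIONS = {"FP16": 16, "INT8": 8, "4-bit": 4}  # nominal bits per weight


def weights_gb(params_billions: float, bits: float) -> float:
    """Weight footprint in GB for a model of the given size and precision."""
    return params_billions * bits / 8


def precisions_that_fit(params_billions: float, vram_gb: float,
                        reserve_fraction: float = 0.25) -> list[str]:
    """Return the precisions whose weights fit after reserving a buffer."""
    usable_gb = vram_gb * (1 - reserve_fraction)
    return [name for name, bits in PRECISIONS.items()
            if weights_gb(params_billions, bits) <= usable_gb]


if __name__ == "__main__":
    for size in (8, 32, 70):
        fits = precisions_that_fit(size, vram_gb=32.0)
        print(f"{size}B on a 32 GB card: {fits or 'nothing fits'}")
```

On a 32 GB budget with that reserve, an 8B model fits at every listed precision, a 32B model fits only at 4-bit, and a 70B model does not fit at all.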

Step 4: Evaluate NVLink and Multi-GPU Topologies

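For enterprise deployments aiming beyond 70B parameter models, a single card will not suffice. Assess whether your workload requires high-bandwidth GPU-to-GPU communication. Note that the RTX 5090, like the RTX 4090 before it, has no NVLink connector, so multiple cards communicate over PCIe and inference frameworks split the model across them rather than pooling VRAM into one address space. Knowing this up front can save you from purchasing hardware that physically cannot pool memory the way NVLink-equipped data center GPUs can.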
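For a first-pass card count, you can divide the total memory requirement by the usable VRAM per card. The sketch below assumes 32 GB cards and a hypothetical 90% usable fraction per card; it ignores interconnect bandwidth and the uneven layer splits that real frameworks produce, so treat the result as a lower bound.

```python
import math


def gpus_needed(required_gb: float, vram_per_gpu_gb: float = 32.0,
                usable_fraction: float = 0.9) -> int:
    """Lower bound on the number of cards needed to hold `required_gb`."""
    if required_gb <= 0 or vram_per_gpu_gb <= 0:
        raise ValueError("sizes must be positive")
    if not 0 < usable_fraction <= 1:
        raise ValueError("usable_fraction must be in (0, 1]")
    # Each card contributes only its usable share of VRAM to the model.
    return math.ceil(required_gb / (vram_per_gpu_gb * usable_fraction))


if __name__ == "__main__":
    for need in (24, 48, 70):
        print(f"{need} GB total -> at least {gpus_needed(need)} card(s)")
```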

Step 5: Secure Appropriate Power and Cooling

High-performance AI hardware draws massive wattage. Failing to account for transient power spikes will cause unpredictable system reboots. Ensure your local deployment specs include ATX 3.0 or ATX 3.1 compliant power supplies with a native 12VHPWR or 12V-2x6 connector to keep the card stable under sustained load.
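A rough power budget can be sketched the same way as a memory budget. Everything numeric below is an assumption for illustration: 575 W of board power per card, 250 W for the rest of the system, a 1.2x headroom multiplier for transients, and a short list of common retail PSU sizes. Check the vendor spec sheets for your actual parts before buying.

```python
STANDARD_PSU_WATTS = (850, 1000, 1200, 1600)  # illustrative retail tiers


def required_watts(gpu_watts: float, gpu_count: int,
                   rest_of_system_watts: float, headroom: float = 1.2) -> float:
    """Sustained system draw multiplied by a headroom factor for spikes."""
    return (gpu_watts * gpu_count + rest_of_system_watts) * headroom


def pick_psu(gpu_watts: float, gpu_count: int = 1,
             rest_of_system_watts: float = 250.0, headroom: float = 1.2):
    """Return the smallest listed PSU size that covers the need, or None."""
    need = required_watts(gpu_watts, gpu_count, rest_of_system_watts, headroom)
    for size in STANDARD_PSU_WATTS:
        if size >= need:
            return size
    return None  # nothing in the list is large enough


if __name__ == "__main__":
    for count in (1, 2):
        choice = pick_psu(575.0, gpu_count=count)
        label = f"{choice} W" if choice else "beyond the listed tiers"
        print(f"{count} card(s): {label}")
```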

RTX 5090 vs Enterprise VRAM Usage

| Model Size | Quantization | Est. Minimum VRAM | Risk Level |
|---|---|---|---|
| 8B - 14B | FP16 | 16GB - 28GB | Low to Moderate |
| 32B - 35B | 4-bit (GGUF) | 20GB - 24GB | Moderate |
| 70B - 72B | 4-bit (EXL2) | 36GB - 48GB | High (Requires Multi-GPU) |

Frequently Asked Questions (FAQ)

How much VRAM does the RTX 5090 have?

The RTX 5090 ships with 32GB of GDDR7 memory on a 512-bit bus. This provides very high bandwidth, but larger models still require strategic quantization to fit.

Is the RTX 5090 good for local LLMs?

Yes, it is exceptionally powerful for local LLMs. Its next-generation architecture and memory bandwidth allow for incredibly fast token generation, provided your model fits entirely within the available VRAM footprint.

Can the RTX 5090 run a 120B parameter model?

A single RTX 5090 cannot run a 120B parameter model without severe offloading. Even with heavy 4-bit quantization, a 120B model requires upwards of 70GB of VRAM, necessitating a multi-GPU setup.
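As a worked check using the estimate $V \approx \frac{P \times Q}{8} + C$, and assuming a context overhead of $C = 10$ GB purely for illustration:

$$V \approx \frac{120 \times 4}{8} + 10 = 60 + 10 = 70 \text{ GB}$$

The weights alone come to 60 GB, so even with no context overhead the model would need more than one 32GB card.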

What are the RTX 5090 VRAM requirements for training?

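Training is more memory-intensive than inference because gradients and optimizer states must be stored alongside the weights. Full fine-tuning can demand many times the inference footprint; parameter-efficient methods such as LoRA and QLoRA shrink this gap considerably, but you should still budget roughly 2x to 3x the VRAM of a standard inference workload once activations are included.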
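The gap between full fine-tuning and adapter-based methods can be sketched with a common rule of thumb: mixed-precision training with the Adam optimizer keeps about 16 bytes per trainable parameter (FP16 weights and gradients plus FP32 master weights and two optimizer moments). The functions below apply that rule; the 1% trainable fraction for the adapter case is a hypothetical value, and activation memory, which can be substantial, is deliberately excluded.

```python
BYTES_PER_TRAINABLE_PARAM = 16  # rule of thumb for mixed-precision Adam


def full_finetune_gb(params_billions: float) -> float:
    """Weights, gradients, and optimizer states when every parameter trains."""
    return params_billions * BYTES_PER_TRAINABLE_PARAM


def adapter_finetune_gb(params_billions: float, base_bits: float,
                        trainable_fraction: float) -> float:
    """Frozen base weights plus training state for the adapter only."""
    frozen_gb = params_billions * base_bits / 8
    trainable_gb = (params_billions * trainable_fraction
                    * BYTES_PER_TRAINABLE_PARAM)
    return frozen_gb + trainable_gb


if __name__ == "__main__":
    size = 8  # billions of parameters
    print(f"Full fine-tune:        {full_finetune_gb(size):.1f} GB")
    print(f"Adapter, FP16 base:    {adapter_finetune_gb(size, 16, 0.01):.2f} GB")
    print(f"Adapter, 4-bit base:   {adapter_finetune_gb(size, 4, 0.01):.2f} GB")
```

These figures exclude activations, so real usage will be higher, but they show why adapter methods on a quantized base are the practical route to fine-tuning on a single consumer card.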

How does RTX 5090 compare to previous generations for AI?

It represents a large step up in memory bandwidth and tensor core performance, with roughly 1.8 TB/s of memory bandwidth compared with about 1.0 TB/s on the RTX 4090. Feeding data faster keeps the compute units from sitting idle, which translates directly into higher tokens-per-second in AI applications.

Do I need NVLink for RTX 5090s?

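The RTX 5090 does not support NVLink. To run models exceeding a single card's capacity (like 70B+ models), inference frameworks shard the model across multiple cards over PCIe. This works well when each card holds a contiguous group of layers, but workloads that need heavy GPU-to-GPU traffic will be bottlenecked by PCIe bandwidth; for those, NVLink-equipped data center GPUs are the better fit.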

What power supply is needed for RTX 5090 AI setups?

Continuous AI workloads demand stable power. A high-quality ATX 3.0 or ATX 3.1 power supply of 1000W to 1200W is strongly recommended for a single-card system to handle transient spikes safely; multi-GPU builds need correspondingly more.

Is 32GB VRAM enough for local AI models?

Yes, 32GB is plenty for running highly quantized 30B to 35B models or running smaller 8B models with massive context windows. It hits a "sweet spot" for developers balancing cost and capability.

How to optimize LLMs for RTX 5090?

Optimization relies heavily on quantization (GGUF, EXL2), utilizing optimized backends like vLLM or TensorRT-LLM, and strictly managing your KV cache sizing to ensure weights never spill over into system memory.
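One way to manage KV cache sizing in practice is to work backwards from the card: subtract the weights and a safety reserve from total VRAM, then divide what is left by the cache cost per token. The sketch below does that. The model shape, the 18 GB weight footprint, and the 2 GB reserve are hypothetical values for illustration, and the per-token cost assumes an FP16 cache.

```python
def kv_bytes_per_token(num_layers: int, num_kv_heads: int, head_dim: int,
                       bytes_per_value: int = 2) -> int:
    """Cache bytes added per token; the factor of 2 covers keys and values."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_value


def max_context_tokens(vram_gb: float, weights_gb: float,
                       bytes_per_token: int, reserve_gb: float = 2.0) -> int:
    """Largest total token count (across all sequences) that stays in VRAM."""
    free_bytes = (vram_gb - weights_gb - reserve_gb) * 1e9
    if free_bytes <= 0:
        return 0  # the weights alone already exhaust the budget
    return int(free_bytes // bytes_per_token)


if __name__ == "__main__":
    # Hypothetical 64-layer model with 8 KV heads of dimension 128.
    per_token = kv_bytes_per_token(num_layers=64, num_kv_heads=8, head_dim=128)
    budget = max_context_tokens(vram_gb=32.0, weights_gb=18.0,
                                bytes_per_token=per_token)
    print(f"{per_token} bytes per token, room for about {budget} cached tokens")
```

The token budget is shared across every concurrent sequence, so serving four requests at once leaves each with roughly a quarter of it.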

What is the RTX 5090 tensor core performance?

The RTX 5090's fifth-generation tensor cores accelerate dense and sparse matrix multiplication and add support for lower-precision formats such as FP4. This raw throughput lets the card generate tokens quickly, but only when the memory bus can feed it fast enough.

Sources & References

- ISO/IEC 5259-2: Data quality for analytics and machine learning — Part 2: Data quality measures.

- IEEE Standard for Machine Learning Hardware Architectures (IEEE 2976-2024).

- National Institute of Standards and Technology (NIST) Special Publication on Local AI Deployment Constraints.

Maximizing your AI hardware budget means moving beyond marketing hype and respecting the hard physics of memory bandwidth. By mapping your exact VRAM requirements, leveraging smart quantization, and pairing stable infrastructure with data quality standards like ISO/IEC 5259-2, you can build a highly performant, fully local AI pipeline.