The Fatal Flaw in Your RAG Architecture

- Retrieval is Not Engineering: Standard RAG pipelines fail when they lack intentional context design and formatting.

- Structure Trumps Volume: RAG systems cannot reliably handle infinite context windows without rigorous data structuring.

- Optimization is Mandatory: Optimizing your vector search database for context relevance is a strict requirement, not an option.

- Context Overpowers Search: Context-aware retrieval in machine learning focuses on semantic relationships, not just keyword matching.

Think retrieval is enough? Understanding context engineering vs rag is vital before your data system collapses under load.

Many enterprise teams mistakenly believe that simply connecting a vector database to an LLM solves hallucination issues. However, dumping raw, unformatted text chunks into a model is exactly why your outputs remain unreliable at scale.

To truly fix this structural deficit, you must step back and understand what is context engineering in ai.

Once you grasp the foundational architecture, you will realize why blindly relying on standard retrieval is a massive operational risk. Let's explore the critical differences and how to restructure your pipeline for maximum precision.

The Hard Limits of Retrieval Augmented Generation

The fundamental error most engineering teams make is treating LLMs like search engines. Standard Retrieval-Augmented Generation (RAG) is purely a fetching mechanism.

It pulls text based on semantic similarity, but it completely ignores the cognitive payload the model actually needs to reason.

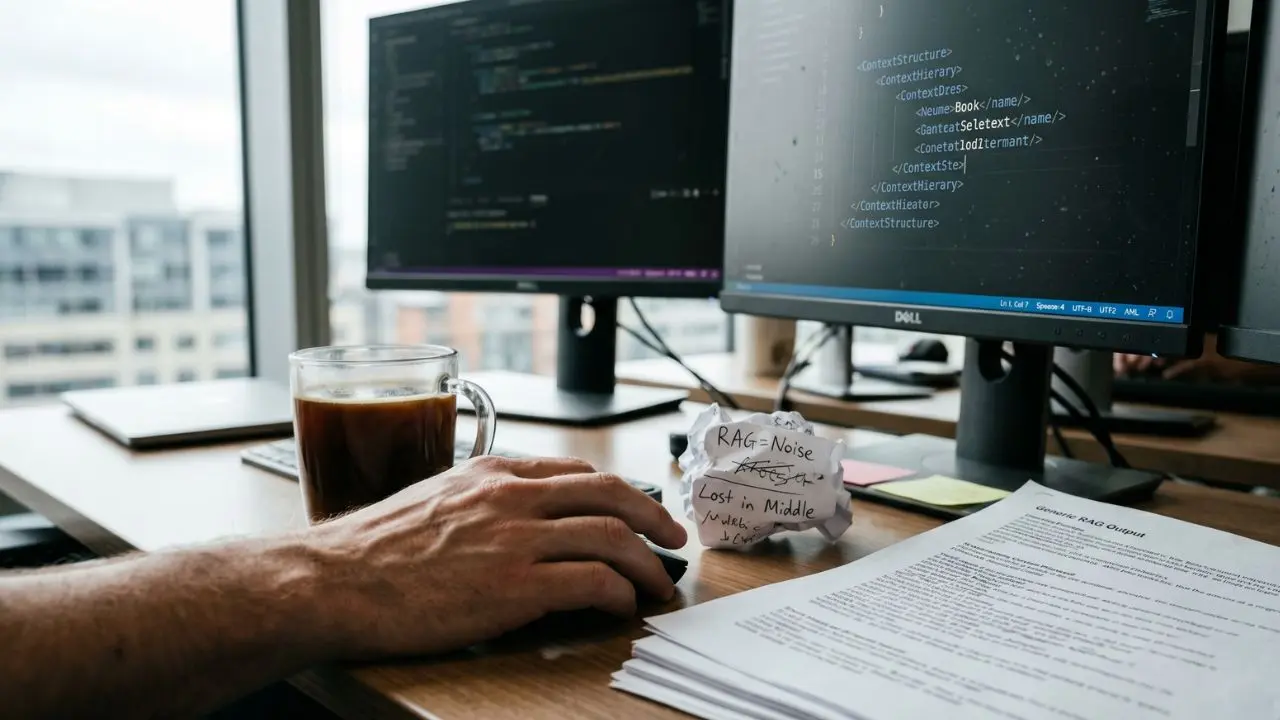

These are the hard limits of retrieval augmented generation. When a model receives disjointed, contradictory, or badly formatted text chunks, it suffers from "lost in the middle" syndrome.

If you are just beginning to build these systems, you must move beyond basic RAG pipeline architecture basics. Your architecture must evolve from simple retrieval to intelligent data orchestration.

Context Engineering vs RAG: The Exact Difference

Understanding the exact difference between context engineering vs RAG is crucial for scaling your AI. RAG is simply the transportation layer. It retrieves data from your database and delivers it to the prompt.

Context engineering is the structural layer. It dictates how that retrieved data is formatted, prioritized, and logically injected so the LLM can mathematically process it.

Without context engineering, RAG is just noise. If you want to deploy reliable models, you need a comprehensive enterprise context engineering strategy.

Optimizing Your RAG Pipeline Architecture

To build a resilient system, you must redesign your RAG pipeline architecture. This means intervening after the retrieval step but before the LLM generation step.

You must implement middleware that filters, summarizes, and ranks the retrieved documents. Structuring data using XML or JSON tags ensures the LLM understands the hierarchy of the injected knowledge.

Vector Database Context Optimization

You must execute vector database context optimization to ensure your similarity searches return high-density facts. Stop using generic embedding models that don't understand your domain's specific jargon.

- Tune your chunking strategy: Align chunk sizes with specific semantic concepts, not arbitrary character limits.

- Implement metadata filtering: Ensure your vector search can filter by date, author, or data type before calculating cosine similarity.

- Utilize hybrid search: Combine keyword-based BM25 with vector similarity to capture exact matches and semantic meaning.

Mastering Context-Aware Retrieval

Context-aware retrieval in machine learning requires your system to understand the user's conversational state. It is not enough to answer a single query in a vacuum.

Your retrieval system must dynamically adjust its search parameters based on the previous five turns of the conversation. This ensures the injected context remains highly relevant as the user's intent naturally shifts over time.

Rethink Your Pipeline Today

Stop treating data retrieval as a magic bullet for AI accuracy. If you are experiencing high error rates, the fatal flaw isn't your LLM—it is your RAG architecture.

Begin implementing strict context engineering protocols today. Audit your data chunking, enforce semantic formatting, and watch your hallucination rates plummet.

Frequently Asked Questions (FAQ)

What is the exact difference between context engineering vs RAG?

RAG is the mechanical process of fetching data from a database. Context engineering is the architectural practice of formatting, structuring, and optimizing that retrieved data so the LLM can accurately reason without hallucinating.

Does context engineering eventually replace RAG?

No, it does not replace it. Context engineering enhances RAG. RAG acts as the necessary retrieval mechanism, while context engineering provides the critical framework to process and inject that data effectively into the LLM.

How do RAG pipelines and context engineering frameworks work together?

They work in sequence. The RAG pipeline retrieves relevant raw documents based on semantic search. The context engineering framework then filters, formats, and structurally injects those documents into the LLM's prompt window.

Why does standard RAG fail without intentional context design?

Standard RAG fails because it dumps unstructured, often conflicting text chunks into the model. Without intentional context design, the model cannot distinguish between critical facts and irrelevant noise, leading directly to hallucinations.

What is context-aware retrieval in machine learning?

It is a retrieval method that considers the broader situational data, such as conversational history, user intent, and metadata, rather than just executing a mathematically isolated vector search on a single user query.

How do you optimize a vector search database for context relevance?

You optimize it by fine-tuning embedding models for your specific domain, implementing strict metadata filtering, utilizing hybrid search (keyword plus semantic), and adjusting chunk sizes to capture complete logical thoughts.

Can RAG systems reliably handle infinite context windows?

No. Even with massive context windows, RAG systems struggle if data isn't engineered. Models suffer from the "lost in the middle" effect, degrading recall accuracy when overwhelmed with poorly structured, high-volume retrieval data.

What are the hard limits of retrieval augmented generation?

The hard limits include poor handling of contradictory retrieved facts, latency issues at scale, dependency on embedding quality, and the inability to natively reason about unstructured data without explicit context formatting.

How should you format unstructured documents for better RAG context?

Unstructured documents should be converted into clear, hierarchical formats. Use XML tags or JSON objects to distinctly label metadata, headers, primary facts, and summaries before injecting them into the LLM's prompt window.

When should you rely on fine-tuning instead of RAG context injection?

Rely on fine-tuning when you need the model to learn a specific tone, format, or new structural behavior. Use RAG context injection when you need the model to reference rapidly changing, proprietary factual data.