This blog is part of Agentic AI Product Management.

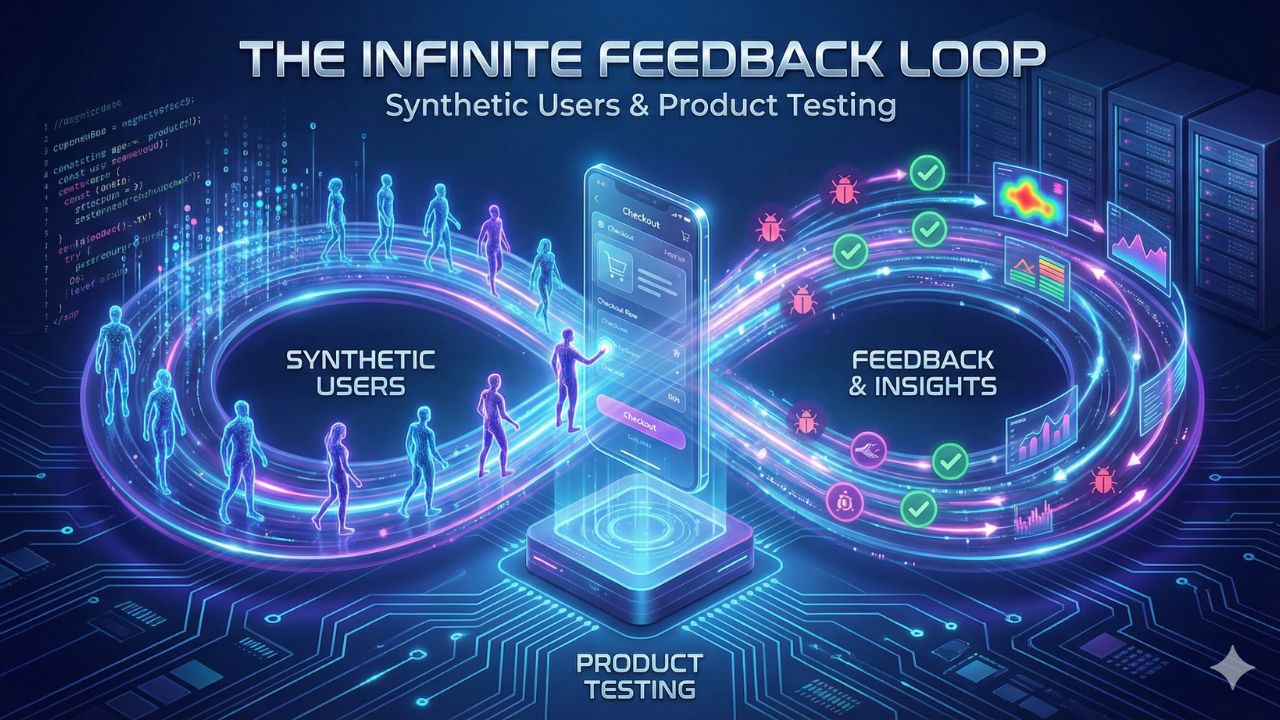

The Infinite Feedback Loop: Synthetic Users & Product Testing

For whom: Growth Product Managers, QA Engineers, and UX Researchers.

The rise of autonomous AI agents and generative models has introduced a paradigm shift in how we test products. We are moving from the era of "Agile," where we iterate based on human feedback, to the era of "Agentic," where software autonomously validates software.

Instead of waiting for real users to log in, interact, and eventually complain, Product Managers can now deploy Synthetic Users—AI agents engineered to interact with the product and generate zero-latency, infinite feedback. This guide explores how to build this infrastructure and why it represents the single biggest leap in product velocity since the introduction of CI/CD.

The Synthetic Evolution: Automated Discovery

In the past, user research was a bottleneck. It was slow, expensive, and logistically painful. You’d recruit participants, run a focus group or an A/B test, gather data, and wait weeks for insights. By the time the data was analyzed, the product context had often shifted.

Synthetic users change the geometry of the product loop by offering continuous, automated product discovery. These are not static scripts; they are LLM-driven agents capable of reasoning, planning, and reacting to your UI just as a human would.

Simulated Focus Groups: The Persona Matrix

Synthetic users allow PMs to move beyond static, two-dimensional personas tacked onto a wall. By coding demographic variables (age, location, income) and behavioral vectors (risk aversion, patience level, tech literacy, financial anxiety), you can create an N-dimensional Persona Matrix.

Consider the complexity you can model:

- The Impatient Power User: An agent with high tech literacy but extremely low patience. One way to model this is to make the agent behave erratically (for example, by raising its sampling "temperature" or instructing it to abandon the task) whenever load times exceed 500ms.

- The Hesitant Novice: An agent that reads every tooltip, hesitates on payment screens, and abandons the cart if trust signals are missing.

- The Fraudulent Actor: An agent explicitly instructed to attempt SQL injections, use stolen credit card patterns, or exploit promo codes.
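
To make the matrix idea concrete, here is a minimal sketch that expands a handful of trait axes into every persona combination and renders each one as a system prompt. The axis names, their values, and the `Persona` class are illustrative assumptions, not part of any particular framework.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class Persona:
    age_band: str
    tech_literacy: str
    patience: str
    risk_aversion: str

    def system_prompt(self) -> str:
        """Render the persona as a system prompt for an LLM-driven agent."""
        return (
            f"You are a user aged {self.age_band} with {self.tech_literacy} tech literacy, "
            f"{self.patience} patience, and {self.risk_aversion} risk aversion. "
            "Behave consistently with these traits while using the product."
        )


# Hypothetical axes; every extra axis multiplies the number of personas.
AXES = {
    "age_band": ["18-29", "30-59", "60+"],
    "tech_literacy": ["low", "high"],
    "patience": ["low", "high"],
    "risk_aversion": ["low", "high"],
}


def build_persona_matrix(axes: dict[str, list[str]]) -> list[Persona]:
    """Return one Persona per combination of axis values (Cartesian product)."""
    names = list(axes)
    return [Persona(**dict(zip(names, combo))) for combo in product(*axes.values())]


personas = build_persona_matrix(AXES)
print(len(personas))  # 3 * 2 * 2 * 2 = 24 combinations
print(personas[0].system_prompt())
```

Because the matrix grows multiplicatively, in practice you would likely sample from it rather than run every cell.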

Scaling the "Unscalable"

The matrix allows for massive scalability in scenarios that were previously unscalable:

- Hyper-Scale Simulation: Deploy hundreds of "users" in minutes to stress-test your backend or simulate a launch in a new Tier 2 city in India, complete with network latency simulation.

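- Deterministic Repetition: Run the exact same sequence of actions a million times to isolate intermittent bugs that are nearly impossible for humans to reproduce (the "Heisenbugs"). This relies on replaying a recorded or seeded action sequence, since a live LLM agent is not deterministic by default.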

- Edge Case Discovery: The core value of a synthetic user is not just speed, but the ability to test edge cases—the 1% scenarios that would take months or years to find in a live environment.
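
Deterministic repetition is easiest to see with seeded sessions. The sketch below assumes the agent's actions can be reduced to a seeded pseudo-random sequence; the action names and the `checkout_is_consistent` function are hypothetical stand-ins for a real product with an intermittent bug.

```python
import random

ACTIONS = ["click_buy", "scroll", "type_coupon", "go_back", "double_click_buy"]


def generate_session(seed: int, steps: int = 8) -> list[str]:
    """Produce an action sequence that is fully determined by the seed."""
    rng = random.Random(seed)  # local RNG, so global random state is untouched
    return [rng.choice(ACTIONS) for _ in range(steps)]


def checkout_is_consistent(actions: list[str]) -> bool:
    """Hypothetical product stand-in: breaks when 'go_back' directly follows a double-click."""
    return all(
        not (prev == "double_click_buy" and cur == "go_back")
        for prev, cur in zip(actions, actions[1:])
    )


def find_failing_seeds(seeds: range) -> list[int]:
    """Scan many seeded sessions and keep the seeds that expose the bug."""
    return [s for s in seeds if not checkout_is_consistent(generate_session(s))]


failing = find_failing_seeds(range(2000))
# A failing seed always replays to the identical sequence, so the bug can be reproduced on demand.
for seed in failing[:3]:
    assert generate_session(seed) == generate_session(seed)
    print(seed, generate_session(seed))
```

The design choice here is to store the seed (or the recorded action trace) with every failure report, so an engineer can replay exactly what the agent did.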

The Anatomy of a Synthetic User

To understand how to implement this, we must look at the architecture of a synthetic user agent. It is not merely a script; it is a cognitive architecture composed of four distinct layers:

- The Persona Layer (System Prompt): This defines the "who." It includes the bio, the goals, the constraints (e.g., "You are a senior citizen with poor eyesight and a budget of $50").

- The Memory Layer (Vector Database): This gives the agent context. It remembers past interactions with your app. If the agent failed to log in yesterday, it "remembers" that frustration today.

- The Action Layer (Tools): These are the APIs or browser automation tools (like Selenium or Playwright) that allow the agent to click, scroll, type, and navigate.

- The Observation Layer (Multimodal Vision): Using Vision-Language Models (VLMs), the agent "sees" the screen. It doesn't just look for code selectors; it looks at the pixels, identifying if a button is obscured or if text has low contrast.
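
The four layers can be wired together in a few lines. This is a minimal sketch under simplifying assumptions: the `SyntheticUser` class, the `FakeScreen` stub, and the rule-based policy are all hypothetical placeholders. In a real setup the policy would be an LLM call, the observer a vision-language model, the tools a browser automation library, and the memory a vector database.

```python
from dataclasses import dataclass, field
from typing import Callable, Protocol


class Observer(Protocol):
    """Observation layer: anything that can describe the current screen."""

    def describe_screen(self) -> str: ...


# A policy maps (persona prompt, observation, memory) to the name of the next action.
Policy = Callable[[str, str, list[str]], str]


@dataclass
class SyntheticUser:
    persona_prompt: str                   # Persona layer (system prompt)
    observer: Observer                    # Observation layer (a VLM in practice)
    tools: dict[str, Callable[[], None]]  # Action layer (browser automation in practice)
    decide: Policy                        # Reasoning step (an LLM call in practice)
    memory: list[str] = field(default_factory=list)  # Memory layer (a vector DB in practice)

    def step(self) -> str:
        """Observe, decide, act, and remember; returns the action taken."""
        screen = self.observer.describe_screen()
        action = self.decide(self.persona_prompt, screen, self.memory)
        if action in self.tools:
            self.tools[action]()
        else:
            action = "stop"  # unknown actions are treated as giving up
        self.memory.append(f"saw {screen!r}, did {action!r}")
        return action


class FakeScreen:
    """Stand-in for a real browser page."""

    def __init__(self) -> None:
        self.page = "cart"

    def describe_screen(self) -> str:
        return f"page={self.page}"


screen = FakeScreen()


def go_to_checkout() -> None:
    screen.page = "checkout"


def rule_based_policy(persona: str, observation: str, memory: list[str]) -> str:
    # Placeholder for an LLM call: proceed from the cart, otherwise stop.
    return "go_to_checkout" if observation == "page=cart" else "stop"


agent = SyntheticUser(
    persona_prompt="You are a senior citizen with poor eyesight and a budget of $50.",
    observer=screen,
    tools={"go_to_checkout": go_to_checkout},
    decide=rule_based_policy,
)
first, second = agent.step(), agent.step()
print(first, second, agent.memory)
```

Keeping each layer behind a small interface makes it possible to swap a stub for the real component without touching the agent loop.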

From QA to Autonomous Validation

Synthetic users eliminate the waiting time between development and validation, collapsing the time-to-market. This moves us from "Quality Assurance" (checking if it works) to "Autonomous Validation" (checking if it brings value).

Continuous, Zero-Latency Feedback

The Synthetic Evolution replaces the traditional feedback loop (Develop → Deploy → Wait for Users → Analyze) with a Self-Correcting, Always-On loop. This is crucial for modern DevOps: Code changes are instantly validated against a synthetic environment that mirrors production data.

- A/B Testing with Agents: Before rolling out a new feature to 5% of your user base, run it against 5,000 synthetic agents. The agents' goal-completion rates give you an early signal of the likely winner, which you can then confirm with real traffic.

- Self-Healing Tests: Traditional automated tests are brittle; if a developer renames a CSS class or element ID, the test fails. Modern AI-powered testing tools can repair such tests automatically. If the "Buy" button changes color or ID, the agent infers from context that it is still the "Buy" button and updates the test script.

- Chaos Engineering: Unleash agents with "chaotic" directives. Tell them to double-click every button, use emojis in name fields, and disconnect the internet mid-transaction. This hardens the product against real-world unpredictability.
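
For the agent-based A/B test, one simple way to compare goal-completion rates is a two-proportion z-test. The sketch below uses only the standard library; the counts in the usage example are made-up illustrative numbers, not results from any real experiment.

```python
from math import erf, sqrt


def two_proportion_z_test(
    success_a: int, n_a: int, success_b: int, n_b: int
) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in goal-completion rates."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Standard normal CDF expressed through the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)


# Illustrative counts: 2,500 agents per variant, 1,000 vs 1,100 completed the goal.
z, p_value = two_proportion_z_test(1000, 2500, 1100, 2500)
print(f"z={z:.2f}, p={p_value:.4f}")
```

A caveat on interpretation: agents driven by the same model are not independent samples in the way real users are, so a small p-value here says the variants differ for these agents, not necessarily for your customers.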

Validation Beyond Function: Visual Regression

A key application is Visual Regression Testing. Traditional tests confirm that a button functions (clicks trigger events). Synthetic testing confirms that the button looks correct and is rendered consistently across hundreds of devices and browsers.

Using AI that simulates human vision, these tests can flag issues like:

- Layout Shifts: Elements overlapping on smaller screens.

- Brand Compliance: Incorrect hex codes or font weights that don't match the design system.

- Accessibility Violations: Detecting when color contrast ratios fall below WCAG thresholds, using agents that simulate visual impairments.
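
The contrast check in particular is plain arithmetic. The sketch below follows the WCAG 2.x definitions of relative luminance and contrast ratio, with the AA thresholds of 4.5:1 for normal text and 3:1 for large text. The function names are my own, and it assumes colors arrive as six-digit hex strings.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, per the WCAG 2.x formula."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a '#RRGGBB' color (0.0 for black, 1.0 for white)."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio, always computed as lighter over darker."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """True if the pair meets WCAG AA (4.5:1 normal text, 3:1 large text)."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


for fg in ("#000000", "#767676", "#777777"):
    print(fg, round(contrast_ratio(fg, "#FFFFFF"), 2), passes_aa(fg, "#FFFFFF"))
```

A vision-based agent would first have to extract the foreground and background colors from the rendered pixels; this sketch covers only the threshold check that follows.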

The Economics of Synthetic Testing

Implementing synthetic users is an investment, but the ROI calculation is distinct from traditional tooling. It shifts spending from headcount (hiring more QA engineers or researchers) to compute.

| Factor | Traditional User Research | Synthetic User Testing |
|---|---|---|
| Cost | High (Recruiting, Incentives, Time) | Low (API Token costs, Compute) |
| Time to Insight | Weeks (Planning to Analysis) | Minutes |
| Sample Size | Small (n=5 to n=50) | Large (n=1,000+), limited only by compute budget |
| Bias | Human Observer Bias | Training Data Bias (Model Bias) |

The Human Element: Limitations & Ethics

While powerful, synthetic users are not a panacea. It is vital for Product Managers to understand the "uncanny valley" of AI testing.

1. Emotional Nuance: AI agents can simulate frustration, but they do not truly *feel* it. They cannot replicate the serendipitous discovery of a new use case that a human might stumble upon. Real users are essential for discovering unanticipated needs and validating true Product-Market Fit (PMF).

2. Model Hallucination: An agent might report a bug that doesn't exist because it "hallucinated" an error message, or conversely, it might successfully navigate a broken UI because it "guessed" the next step better than a confused human would.

3. The "Average" Trap: If your synthetic users are based on "average" training data, you risk optimizing your product for a generic mean, smoothing out the unique quirks that might actually delight your specific niche audience.
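
One inexpensive guard against hallucinated bug reports is to require that any error text an agent quotes actually appears in what the page rendered at that step. The sketch below assumes you capture page text per step; the `BugReport` structure and the sample data are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class BugReport:
    agent_id: str
    quoted_error: str  # error text the agent claims it saw
    step: int          # step index where it claims to have seen it


def is_grounded(report: BugReport, page_text_by_step: dict[int, str]) -> bool:
    """A report is grounded only if the quoted error literally appeared on the page."""
    quote = report.quoted_error.strip().lower()
    captured = page_text_by_step.get(report.step, "").lower()
    return bool(quote) and quote in captured


def triage(
    reports: list[BugReport], page_text_by_step: dict[int, str]
) -> tuple[list[BugReport], list[BugReport]]:
    """Split reports into (grounded, suspect) for human review."""
    grounded: list[BugReport] = []
    suspect: list[BugReport] = []
    for report in reports:
        (grounded if is_grounded(report, page_text_by_step) else suspect).append(report)
    return grounded, suspect


# Hypothetical captured page text and agent reports.
pages = {3: "Payment failed: card declined. Please try another card."}
reports = [
    BugReport("agent-1", "card declined", 3),
    BugReport("agent-2", "Internal Server Error 500", 3),
]
grounded, suspect = triage(reports, pages)
print([r.agent_id for r in grounded], [r.agent_id for r in suspect])
```

Exact substring matching is deliberately strict; it will also flag honest paraphrases, so suspect reports are best routed to a human rather than discarded.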
