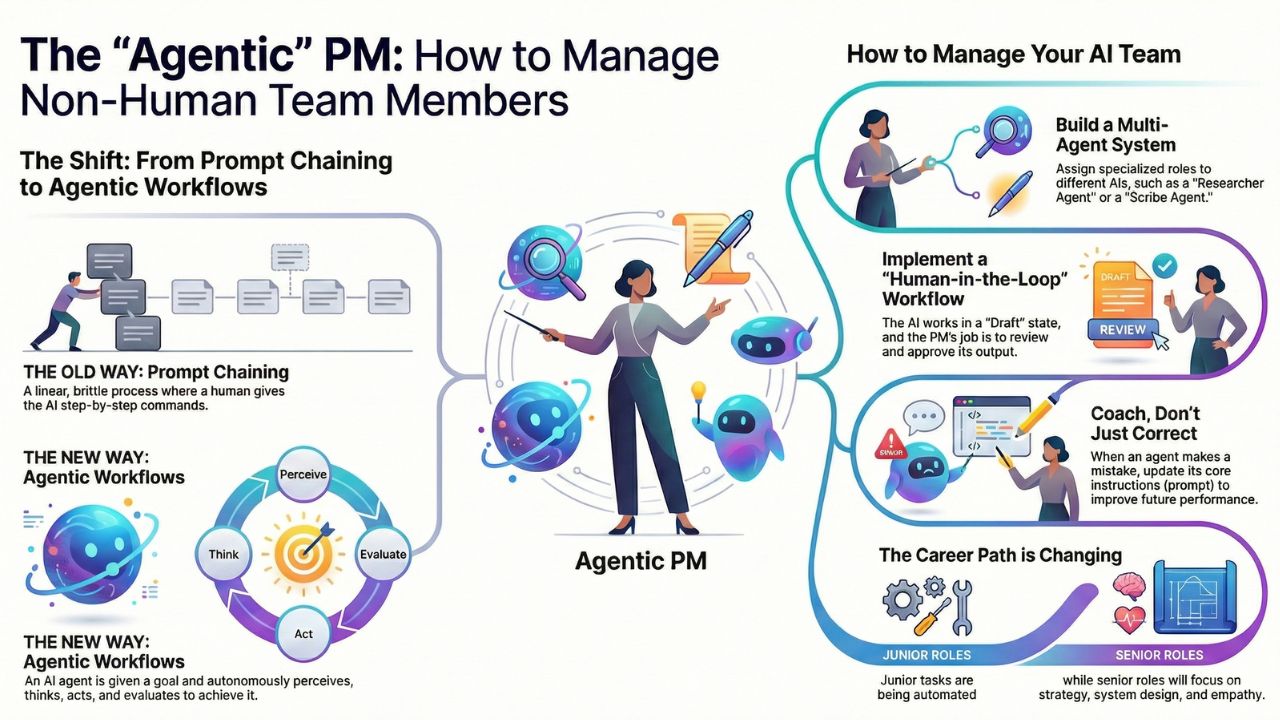

The "Agentic" PM: How to Manage Non-Human Team Members

What's New in This Update

- Metrics overhaul: Added specific guidance on moving from output-based KPIs to measuring Task Completion Rates.

- Tooling shifts: Expanded the org chart section to reflect the 2026 standard of specialized, single-purpose agents over massive monolithic LLMs.

- Risk frameworks: Detailed the "Feedback Loop from Hell" and constitutional governance models used by top enterprise teams.

TL;DR: The Agentic Transition

- The shift: Product Managers no longer write every ticket; they build multi-agent orchestration workflows that do the work autonomously.

- Prompt vs. Agent: Prompting is linear. Agents operate on a continuous loop of Perceive → Think → Act → Evaluate.

- The feedback loop from hell: Unsupervised agents compound errors. Human validation checkpoints are mandatory.

- Career survival: Junior execution roles are vanishing. Securing a role requires mastering systems design, logic flows, and constitutional AI governance.

In the past, a "hands-on" Product Manager wrote every Jira ticket, manually aggregated customer feedback from Zendesk, and personally updated the executive roadmap. In 2026, being "hands-on" means orchestrating a fleet of autonomous AI agents that execute these operational tasks for you.

We have entered the era of agentic product management. The role has shifted fundamentally from doing the granular work to designing the system that does the work. Your "team" now includes highly specialized AI agents that require onboarding, performance reviews, and strict governance just like any human employee.

1. Prompt Chaining vs. Agentic Workflows

To manage this non-human workforce, you must understand the underlying technical architecture. There is a critical difference between a simple conversational "prompt chain" and a true "agentic workflow." Treating an agent like a glorified chatbot will bottleneck your entire product operations process.

Prompt Chaining (The Legacy Method)

This is a linear, brittle process. You instruct the AI to execute Step A, then pass the output to Step B. If Step B fails due to an unexpected API response, the chain breaks completely. It requires constant human supervision and rigid prompt engineering.
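To make the brittleness concrete, here is a minimal Python sketch of a two-step chain. The step functions are hypothetical stand-ins for LLM calls (no real model API is used). Step A's output is handed straight to step B, so a single unexpected, non-JSON response halts the whole run with no recovery path.

```python
import json


def step_a_summarize(transcript: str) -> str:
    # Hypothetical stand-in for an LLM call that is supposed to return JSON.
    return json.dumps({"summary": transcript[:40]})


def step_b_extract(raw: str) -> list[str]:
    # Hypothetical stand-in that assumes step A returned valid JSON with a "summary" key.
    data = json.loads(raw)  # raises json.JSONDecodeError on malformed input
    return [s.strip() for s in data["summary"].split(".") if s.strip()]


def run_chain(transcript: str, step_a=step_a_summarize) -> list[str]:
    # Linear chain: A's output feeds B directly. No retry, no re-planning.
    return step_b_extract(step_a(transcript))


if __name__ == "__main__":
    print(run_chain("Customers want SSO. Export is slow."))
    try:
        # Simulate step A returning an unexpected, non-JSON response.
        run_chain("anything", step_a=lambda _t: "Sorry, I can't help with that.")
    except json.JSONDecodeError as err:
        print("Chain broke:", err.msg)
```

Every fix for a chain like this (retries, validation, fallbacks) has to be hand-written by a human for each failure mode, which is why chains need constant supervision.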

Agentic Workflows (The 2026 Standard)

An agent is given an objective, a set of tools, and the autonomy to figure out how to bridge the gap. It runs a loop modeled on the ReAct (Reason + Act) pattern: Perceive → Think → Act → Evaluate.

For example, instead of telling an AI, "Summarize this transcript and paste it here," you instruct an agent: "Update the Q3 roadmap based on this week's enterprise sales calls." The agent autonomously reads the transcripts, identifies feature requests, checks the existing roadmap database, identifies scheduling conflicts, and drafts a proposed solution. It loops internally until it satisfies its system constraints before presenting the draft to you.
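The loop can be sketched in Python as below. This is an illustrative sketch, not a production agent framework: the tools are hypothetical stand-ins for transcript and roadmap APIs, the "Think" step is a simple rule where a real agent would consult an LLM, and the constraint (propose only requests not already on the roadmap) is an assumption. The point is structural. Unlike the linear chain described above, a failed tool call is observed and retried on the next iteration instead of breaking the run, and the agent stops only when its evaluation passes or when it runs out of steps and hands off to a human.

```python
from collections.abc import Callable


def run_agent(tools: dict[str, Callable[[], list[str]]], max_steps: int = 6) -> dict:
    """Perceive -> Think -> Act -> Evaluate until the draft passes or steps run out.

    Assumes (hypothetically) that `tools` has the keys "requests" and "roadmap".
    """
    memory: dict[str, list[str]] = {}
    draft: list[str] = []
    for step in range(1, max_steps + 1):
        # Perceive: which inputs has the agent not gathered yet?
        missing = [key for key in tools if key not in memory]
        # Think: stand-in for LLM reasoning; gather missing data first, then draft.
        if missing:
            # Act: call a tool; on a timeout, keep going and retry next iteration.
            try:
                memory[missing[0]] = tools[missing[0]]()
            except TimeoutError:
                continue
        else:
            # Act: draft proposals for requests not already on the roadmap.
            draft = sorted(set(memory["requests"]) - set(memory["roadmap"]))
        # Evaluate: hypothetical constraint, a non-empty draft with no roadmap overlap.
        if draft and not set(draft) & set(memory.get("roadmap", [])):
            return {"status": "draft", "items": draft, "steps": step}
    # Out of budget: hand the partial work to a human instead of looping forever.
    return {"status": "needs_human", "items": draft, "steps": max_steps}


def make_flaky(result: list[str], failures: int = 1) -> Callable[[], list[str]]:
    """Hypothetical tool that times out `failures` times before succeeding."""
    calls = {"n": 0}

    def tool() -> list[str]:
        calls["n"] += 1
        if calls["n"] <= failures:
            raise TimeoutError("roadmap API timed out")
        return result

    return tool


if __name__ == "__main__":
    tools = {
        "requests": lambda: ["SSO", "audit logs", "SSO"],
        "roadmap": make_flaky(["audit logs"]),
    }
    print(run_agent(tools))
    # {'status': 'draft', 'items': ['SSO'], 'steps': 4}
```

The output is still a draft: the loop decides when to stop working, not whether its work ships.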

2. The 2026 Product Org Chart: Multi-Agent Systems

Organizational design for the AI era requires a complete rethink of team structure. You are no longer just managing up to stakeholders; you are managing "sideways" within a multi-agent system. Relying on one massive model to do everything causes severe context degradation. Instead, successful PMs deploy a stack of specialized AI agents, each holding a distinct job title.

Here is what your digital team looks like today:

- The Researcher Agent: Scrapes competitor pricing, monitors App Store reviews, and updates a dynamic comparison table daily. This serves as a proxy for continuous synthetic user testing.

- The Scribe Agent: Connects to Zoom, transcribes the daily standup, identifies blockers, drafts follow-up Jira tickets, and pushes updates to Slack.

- The Data Analyst Agent: Monitors product telemetry (Mixpanel/Amplitude) and alerts you only when a core KPI deviates from its historical baseline by more than a defined number of standard deviations.

- The QA Agent: Parses drafted PRDs and tickets (including the Scribe's follow-up tickets), cross-references them against compliance databases, and flags missing edge cases before a human engineer ever sees the ticket.
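The Data Analyst Agent's alert rule can be sketched as a z-score check. The sketch assumes each KPI arrives as a list of recent daily values plus today's value (pulling them from Mixpanel or Amplitude is out of scope here). The 3-sigma threshold and the KPI names are illustrative choices, not recommendations.

```python
import statistics


def kpi_alert(history: list[float], latest: float, max_sigma: float = 3.0) -> bool:
    """True if `latest` is more than `max_sigma` sample std devs from the historical mean."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)  # sample standard deviation; needs >= 2 points
    if stdev == 0:
        return latest != mean  # flat history: any change counts as a deviation
    return abs(latest - mean) / stdev > max_sigma


def kpis_to_alert(kpis: dict[str, tuple[list[float], float]], max_sigma: float = 3.0) -> list[str]:
    """Names of KPIs that should page the PM; everything else stays silent."""
    return sorted(name for name, (history, latest) in kpis.items()
                  if kpi_alert(history, latest, max_sigma))


if __name__ == "__main__":
    kpis = {
        "d7_retention": ([0.41, 0.40, 0.42, 0.41, 0.40, 0.42], 0.35),
        "daily_signups": ([120, 118, 125, 122, 119, 121], 123),
    }
    print(kpis_to_alert(kpis))  # ['d7_retention']
```

Normal day-to-day noise (signups moving from about 121 to 123) stays silent, while a retention drop of roughly six standard deviations pages you.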

The core skill of modern product management is Agent Orchestration—ensuring these entities talk to each other correctly. You cannot allow the Researcher Agent to unilaterally push a feature request that the Data Analyst Agent knows will tank your retention metrics.
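One way to enforce this is to route every cross-agent handoff through an orchestrator instead of letting agents write to shared state directly. The sketch below is a minimal illustration with hypothetical names: the Researcher's feature requests must pass a Data Analyst check before they reach the proposed backlog. That check is modeled as a lookup of projected retention impact (an assumed input), and the 1-point drop threshold is arbitrary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureRequest:
    title: str
    proposed_by: str


def analyst_check(req: FeatureRequest, projected_retention_change: dict[str, float],
                  max_drop: float = 0.01) -> tuple[bool, str]:
    """Hypothetical Data Analyst Agent veto: block requests projected to hurt retention."""
    delta = projected_retention_change.get(req.title, 0.0)
    if delta < -max_drop:
        return False, f"vetoed: projected retention change {delta:+.1%}"
    return True, "ok"


def orchestrate(requests: list[FeatureRequest],
                projected_retention_change: dict[str, float]) -> tuple[list[str], list[str]]:
    """No agent writes to the backlog directly; the orchestrator routes every handoff."""
    proposed, vetoed = [], []
    for req in requests:
        approved, reason = analyst_check(req, projected_retention_change)
        if approved:
            proposed.append(req.title)  # still only a proposal: a human reviews it next
        else:
            vetoed.append(f"{req.title} ({reason})")
    return proposed, vetoed


if __name__ == "__main__":
    reqs = [FeatureRequest("Remove free tier", "researcher"),
            FeatureRequest("Dark mode", "researcher")]
    print(orchestrate(reqs, {"Remove free tier": -0.04}))
    # (['Dark mode'], ['Remove free tier (vetoed: projected retention change -4.0%)'])
```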

Read Next: Want to know how to set the exact boundaries for these agents? Check out our guide on constitutional governance and guardrails.

3. Human-in-the-Loop (HITL) & The Feedback Loop from Hell

The single greatest risk in delegating operational tasks to AI agents is the "set it and forget it" mentality. When autonomous systems interact without human oversight, you risk entering the "Feedback Loop from Hell."

Imagine the Researcher Agent hallucinates a customer pain point. The Strategy Agent reads that output and reprioritizes the roadmap. The Developer Agent begins generating boilerplate code for a feature no human actually requested. By the time a human checks the repository, thousands of dollars in compute and engineering hours have been wasted.
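A back-of-the-envelope model shows why errors compound. Assume, purely for illustration, that each agent in the chain independently introduces an error with probability ε and that nothing downstream catches it. Then the chance that a final output is error-free after n handoffs is (1 - ε)^n.

```python
def clean_output_probability(per_stage_error: float, stages: int) -> float:
    """P(no stage introduced an error), assuming independent stages and no human checks."""
    return (1.0 - per_stage_error) ** stages


if __name__ == "__main__":
    for n in (1, 3, 10):
        p = clean_output_probability(0.05, n)
        print(f"{n:>2} unsupervised handoffs at 5% error each: {1 - p:.1%} of outputs corrupted")
```

Under these assumptions, a 5% per-step error rate means three handoffs (Researcher → Strategy → Developer) already corrupt about 14% of outputs (1 - 0.95³ ≈ 0.143), and ten handoffs about 40%. The model is deliberately simple, but it shows why a checkpoint between stages matters more as the chain grows.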

To prevent this, you must implement strict human-in-the-loop workflows:

- The "Draft" State Constraint: Agents must never commit directly to production databases, live code, or public-facing roadmaps. All outputs must terminate in a "Draft" or "Proposal" state.

- The Review Gate: The Product Manager's role shifts from author to high-speed editor. You check outputs for strategic alignment, brand voice, and empathy, the nuanced traits machines lack.

- Dynamic Coaching: When an agent hallucinates, you don't just manually correct the text file. You trace the error back to the system prompt and rewrite the instructions to prevent recurrence. This is the equivalent of coaching your non-human team member.
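The "Draft" State Constraint and the Review Gate can be enforced in code rather than by convention. Here is a minimal sketch with hypothetical names throughout: agents can only create `Proposal` objects in the `DRAFT` state, and `commit` refuses anything without an explicit human approval. A real system would also back this with permissions on the production database or repository, not just application logic.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Proposal:
    agent: str
    content: str
    status: Status = Status.DRAFT
    reviewer: str | None = None


def submit(agent: str, content: str) -> Proposal:
    # Every agent output terminates in DRAFT; agents get no other write path.
    return Proposal(agent=agent, content=content)


def review(proposal: Proposal, reviewer: str, approve: bool) -> Proposal:
    # The human review gate: the PM approves or rejects each draft.
    proposal.reviewer = reviewer
    proposal.status = Status.APPROVED if approve else Status.REJECTED
    return proposal


def commit(proposal: Proposal, live_roadmap: list[str]) -> None:
    # Only human-approved proposals may reach the live (public-facing) artifact.
    if proposal.status is not Status.APPROVED or proposal.reviewer is None:
        raise PermissionError(f"{proposal.agent}: {proposal.status.value}, needs human approval")
    live_roadmap.append(proposal.content)


if __name__ == "__main__":
    roadmap: list[str] = []
    draft = submit("strategy_agent", "Q3: ship SSO")
    try:
        commit(draft, roadmap)
    except PermissionError as err:
        print("Blocked:", err)
    commit(review(draft, reviewer="pm@example.com", approve=True), roadmap)
    print(roadmap)  # ['Q3: ship SSO']
```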

4. Performance Reviews for Non-Human Team Members

If agents are team members, how do you measure their performance? You can no longer rely on traditional volume metrics. An agent generating 50 user stories an hour is useless if those stories are duplicative or contradictory and create rework down the line.

Instead, technical product leaders must understand the difference between agent observability and agent evaluation, and evaluate agents on two specific KPIs:

- Task Completion Rate: What percentage of the time does the agent successfully reach its terminal goal without throwing an error, timing out, or requiring a human to manually intervene in the workflow?

- Tool Use Correctness: When the agent attempts to fetch data, does it call the correct API endpoint? Does it send a well-formed JSON payload with exactly the parameters the endpoint expects, or does it hallucinate parameters?
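Both KPIs can be computed from run logs if each run records its outcome and its tool calls. The sketch below assumes a hypothetical log shape (`Run`, `ToolCall`) and a hypothetical tool registry (`TOOL_SCHEMAS`). A run counts as complete only if it reached its goal with no error, timeout, or human intervention. A tool call counts as correct only if the endpoint exists and the parameters match the schema exactly, with no missing, extra, or wrongly typed fields.

```python
from dataclasses import dataclass, field

# Hypothetical registry: endpoint name -> expected parameter names and types.
TOOL_SCHEMAS: dict[str, dict[str, type]] = {
    "get_roadmap": {"quarter": str},
    "get_metric": {"name": str, "days": int},
}


@dataclass
class ToolCall:
    endpoint: str
    params: dict


@dataclass
class Run:
    reached_goal: bool
    errored: bool = False
    timed_out: bool = False
    human_intervened: bool = False
    tool_calls: list[ToolCall] = field(default_factory=list)


def task_completion_rate(runs: list[Run]) -> float:
    """Share of runs that hit the goal with no error, timeout, or human takeover."""
    done = sum(r.reached_goal and not (r.errored or r.timed_out or r.human_intervened)
               for r in runs)
    return done / len(runs) if runs else 0.0


def call_is_correct(call: ToolCall) -> bool:
    schema = TOOL_SCHEMAS.get(call.endpoint)
    if schema is None:  # wrong or nonexistent endpoint
        return False
    if set(call.params) != set(schema):  # missing or hallucinated parameters
        return False
    return all(isinstance(call.params[k], t) for k, t in schema.items())


def tool_use_correctness(runs: list[Run]) -> float:
    """Share of all tool calls that hit a real endpoint with a schema-valid payload."""
    calls = [c for r in runs for c in r.tool_calls]
    return sum(call_is_correct(c) for c in calls) / len(calls) if calls else 0.0


if __name__ == "__main__":
    runs = [
        Run(True, tool_calls=[ToolCall("get_roadmap", {"quarter": "Q3"})]),
        Run(True, human_intervened=True,
            tool_calls=[ToolCall("get_metric", {"name": "retention", "days": "7"})]),  # wrong type
        Run(False, timed_out=True,
            tool_calls=[ToolCall("get_metrics", {"name": "dau"})]),  # nonexistent endpoint
        Run(True, tool_calls=[
            ToolCall("get_metric", {"name": "dau", "days": 7}),
            ToolCall("get_metric", {"name": "dau", "days": 7, "region": "EU"}),  # hallucinated param
        ]),
    ]
    print(task_completion_rate(runs), tool_use_correctness(runs))  # 0.5 0.4
```

Tracking the two numbers separately helps localize failures: a low completion rate with high tool correctness points at planning or the system prompt, while low tool correctness points at tool descriptions or schemas.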

5. The Future PM Career Path: The "AI-Augmented" Leader

What does the product management career path look like going forward? The traditional "Junior PM" or "Scrum Master" role—heavily indexed on ticket grooming, backlog sorting, and meeting transcription—is rapidly disappearing. The new entry-level foundation is the "AI Ops Specialist," a professional who designs, deploys, and maintains these agentic workflows.

To successfully get hired as an AI Product Manager, your focus must shift from execution to strategic systems design. You will be judged on:

- Strategic Vision: Can you spot market opportunities and behavioral shifts that historical training data cannot predict?

- System Architecture: Can you build a more efficient, autonomous "product factory" than your competitor?

- Human Empathy: Can you navigate complex stakeholder politics, negotiate resources, and connect with human users in a way that silicon cannot replicate?

The future belongs not to the PM who can write the best PRD, but to the PM who can orchestrate the best system to write, test, and validate that PRD while they focus on market strategy.

Frequently Asked Questions (FAQ)

Q1: How do I "fire" an AI agent that isn't performing?

In a multi-agent system, "firing" an agent means deprecating its API access, rewriting its system prompt from scratch, or swapping the underlying foundation model (e.g., migrating from GPT-4o to Claude 4.6). Just like a human employee, if an agent consistently fails to deliver quality output despite coaching, you replace it with a better model or different tool configuration.
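If each agent is defined as configuration rather than hard-coded logic, each of the three "firing" options becomes a one-line change. Here is a minimal sketch with hypothetical field names; the model strings are placeholders, not real model identifiers.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AgentConfig:
    role: str
    model: str  # placeholder identifier for the underlying foundation model
    system_prompt: str
    tools: tuple[str, ...] = ()
    enabled: bool = True


def revoke_access(agent: AgentConfig) -> AgentConfig:
    # Option 1: deprecate the agent by removing its tool/API access and disabling it.
    return replace(agent, tools=(), enabled=False)


def rewrite_prompt(agent: AgentConfig, new_prompt: str) -> AgentConfig:
    # Option 2: keep the model, start over on the instructions.
    return replace(agent, system_prompt=new_prompt)


def swap_model(agent: AgentConfig, new_model: str) -> AgentConfig:
    # Option 3: same role, prompt, and tools on a different model.
    return replace(agent, model=new_model)


if __name__ == "__main__":
    scribe = AgentConfig("scribe", "model-a", "Summarize standups.", ("zoom", "jira", "slack"))
    replacement = swap_model(scribe, "model-b")
    print(replacement.model, replacement.tools, scribe.model)  # model-b ('zoom', 'jira', 'slack') model-a
```

Because the configs are immutable, the previous version stays intact, which makes it easy to roll back if the replacement performs worse on Task Completion Rate or Tool Use Correctness.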

Q2: Will "Agentic PMs" need to know how to code?

You do not need to write production Python or Java, but you must master context engineering and boolean logic flows. Understanding how an agent routes tasks, parses JSON API responses, and executes if/then/else branches is the new programming for product managers.
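The logic meant here is small enough to read without being a software engineer. The sketch below uses a hypothetical payload shape and hypothetical agent names. It parses a JSON ticket from an API response and routes it with plain if/else branches, escalating anything it cannot parse or classify to a human.

```python
import json


def route(raw_response: str) -> str:
    """Pick which (hypothetical) agent handles a ticket, based on its JSON payload."""
    try:
        ticket = json.loads(raw_response)
    except json.JSONDecodeError:
        return "human"  # unparseable response: escalate rather than guess
    if not isinstance(ticket, dict):
        return "human"  # valid JSON, but not the object shape we expect
    kind = ticket.get("type")
    if kind == "bug" and ticket.get("severity") == "critical":
        return "human"  # critical bugs always go to a person
    elif kind == "bug":
        return "qa_agent"
    elif kind == "feature_request":
        return "researcher_agent"
    else:
        return "human"  # unknown type: default to human review


if __name__ == "__main__":
    for payload in ('{"type": "bug", "severity": "low"}',
                    '{"type": "feature_request"}',
                    'Service Unavailable'):
        print(payload, "->", route(payload))
```

The design choice worth noticing is the default: every branch the PM did not anticipate falls through to a human, not to an agent.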

Q3: What is the biggest danger in autonomous product workflows?

The "Feedback Loop from Hell." If a research agent hallucinates a customer pain point, and the strategy agent updates the roadmap based on that hallucination, engineering builds a useless feature. Human-in-the-loop (HITL) validation checkpoints are non-negotiable.

Q4: How do we measure the performance of an AI agent?

Shift away from traditional output volume. Measure agents using Task Completion Rate (does it finish the workflow without a human intervening?) and Tool Use Correctness (does it call the right API with the correct parameters?).

Q5: Are traditional Product Owner roles dead?

The purely administrative portions of the role—writing user stories, grooming backlogs, taking standup notes—are easily automated by agents. Traditional POs must transition to AI Ops Specialists or strategic Product Managers to remain relevant.

Related Resources

- The AI Product Manager: The Complete Guide – The central pillar page.

- 5 AI Agents Every Product Manager Needs in 2026 – The software stack.

- How to Build a Synthetic User Focus Group – Practical agentic workflow example.

- 50+ Copy-Paste Prompts for Product Managers – Your training manual for agents.